Strand 4: Changes in geometry and algebra via Dynamic Geometry Systems and Computer Algebra Systems

Hans-Georg Weigand

Würzburg, Germany

|

Plenary lecture: |

Fitting from function families with CAS and DGS |

|

A microworld for helping students to learn algebra |

|

|

Teaching and learning geometry: Dynamic and visual |

|

|

Dynamic notions for Dynamic Geometry |

|

|

Self-correction in algebraic algorithms with the use of educational software: An experimental work with 13-15 years old pupils |

|

|

A CAS-index applied to engineering mathematics papers |

|

|

Improving maths skills with CAS technology: A CAS project carried out in Scotland with 16-17 year olds using TI-92s |

|

|

Integrating MuPAD into the teaching of mathematics |

|

|

Absolute geometry: Discovering common truths |

|

|

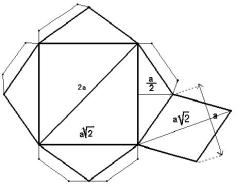

Magic polyhedrons |

|

|

Cubics and quartics on computer |

|

|

Expression equivalence checking in Computer Algebra Systems |

1. Some changes in mathematics learning and teaching are necessary

2. The strand addressed especially the following questions

3. Conclusions of Strand 4 on changes in geometry and algebra

1 Some changes in mathematics learning and teaching are necessary

We are convinced that some changes in mathematics learning and teaching are necessary to give students more benefit from mathematics lessons for their future lives. We are also convinced that new technologies will give us the opportunity to move in this direction. In strand 4 we discussed such changes in connection with the two most important technological tools, DGS and CAS (abbreviated in the following as DaC). These changes concern:

changes in the contents of mathematics,

changes in curricula,

changes in teaching styles and methods of teaching,

changes in the way students learn,

changes in concept formation,

changes in assessment,

changes in working styles,

...

2 The strand addressed especially the following questions

Contents of geometry and algebra

How can we reshape the contents of algebra and geometry programmes so that they have more immediate value to individuals? Can we identify explicit examples or ideas? What are the consequences for the curriculum? Which contents will take on increased importance, and which will decrease in importance?

Concept formation

With DaC, teachers can take new approaches to concept formation. Can these result in students gaining a better understanding? What are the new difficulties? Will DaC give us an opportunity to build a closer relationship between algebra and geometry?

Integration into the curriculum

For which students, and when, is it appropriate to introduce DaC? What are the benefits of, and the obstacles to, an early use of DaC? Which "by hand" skills should be retained?

Working styles

Will mathematical working styles change through the use of DaC? Will this just be at the symbolic level, or will it affect graphical and numerical aspects? What is the relationship between working on the computer and working with paper and pencil?

Assessment

How will the means of assessment need to change when using DaC? Do we need new styles of test problems? Can traditional problems be modified for the "new" tests? Should DaC be allowed for every test?

The literature already contains many proposals and suggestions for changes when using DaC: reducing the complexity of calculations done by hand, giving more importance to experimental work, emphasising the modelling process and the interpretation of results (not "only" carrying out calculations), changes in assessment, and so on. The goal of this workshop, or strand, was to give an overview of the existing proposals for change and to disseminate expected changes in algebra and geometry to teachers, mathematics educators and administrators.

We were also interested in linking our strand closely to the discussion group for the 12th ICMI Study "The Future of the Teaching and Learning of Algebra"

(http://www.edfac.unimelb.edu.au/DSME/icmi-algebra/)

3 Conclusions of Strand 4 on changes in geometry and algebra

The lectures in strand 4 covered a wide spectrum of topics, and if you are looking for answers to the initial questions, you may be disappointed. But if you accept that questions concerning new technologies cannot be answered in general, and you are content with answers to specific problems and questions, you may benefit from the discussions in strand 4. What follows are a few conclusions and hypotheses for future work with CAS and DGS that emerged from the presentations and discussions in strand 4.

We should put more emphasis on CAS as a pedagogical tool in the classroom rather than a technical tool. We have the possibility to explore algorithms, to visualise mathematical objects and to illustrate procedures in new ways. The use of CAS and DGS allows us to illustrate mathematical concepts and theorems, motivate applications and re-introduce "tough" topics, e.g. curvature.


The question of which CAS you use is becoming less important. Command-based programs like MuPAD and menu-based tools like Derive both have their advantages. What matters for the development of mathematical thinking is not the way you use the tool but the way you solve mathematical problems.


The visual and dynamic capabilities of interactive DGS can support “preformal proving”. Using DGS gives this kind of proof a special new quality; such proofs may be called “visual-dynamic proofs”.


Visualisations and multimedia animations in the classroom offer wonderful new possibilities for learning mathematics. But they may also cause additional problems for students, because students first have to learn how to understand these kinds of presentations.


There should be, and there will be, real interactivity between DGS and CAS. Spreadsheets should also play a larger part in this joint venture, and it should be easy to transfer data from one program to another.


With Cinderella or Java Sketchpad you can work with DGS over the Internet. In the future it will be possible to work with DGS, CAS and spreadsheets over the Internet.


It is still an open question how to evaluate the level of difficulty of problems in assessment tests when CAS are allowed. Some kind of “index” is needed to show how the level of a problem differs between a technology-free test and a test in which technology is allowed.


Geometry should be studied more from an experimental and inductive point of view, and DGS in particular offer the chance to place more emphasis on this aspect. But students should also see geometry as a formal system, a (local) axiomatic system, and a field for practising proof.


Programs like Cinderella allow us to contrast different models of geometry, especially Euclidean and non-Euclidean geometry.


Computers make it easy to include in our (university) lessons objects that are described by algebraic equations of higher degree, for example cubics and quartics.


CAS technology contributes to students’ learning and algebraic development, and may also have a positive effect on the development of maths skills. If students can make sense of mathematical procedures and gain greater confidence in their work, this will result in a better understanding of maths skills.


In the future, CAS and DGS will have a tutoring component and may be extended to tutorial systems. But there is still a lot to be done: evaluating students’ answers within a tutoring system is a big challenge for the coming years.


Fitting from function families with CAS and DGS

Jean Flower

Brighton, UK

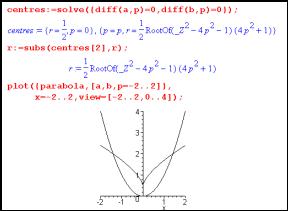

1. Tangents

2. Curvature and fitting circles at a point on the parabola

3. Best fit circles at arbitrary points on the parabola

4. Curvature on handheld technology

5. Fitting circles to any curve

6. Fitting a best fit parabola

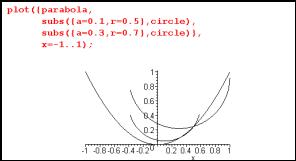

The use of CAS and DGS allows us to illustrate existing work (e.g. eigenvalues), motivate applications of existing work (e.g. computer graphics and animation) and re-introduce "tough" topics (e.g. curvature). As CAS and DGS are used to investigate or solve a problem, questions arise. These provide an opportunity to integrate side-topics into the main train of thought, and to include reflective thinking and analysis as part of an assessment process. In this article, I have chosen one mathematical area as a vehicle for illustrating the kind of potential that DGS and CAS open up. The algebra involved would be challenging by hand, but it could be brought back into the classroom (at first- or second-year university level) with CAS as algebraic support.

The chosen example concerns the "best fit" from a family of functions to a given curve at a given point. To start with, the family of functions will be the family of lines, and the given curve will be a parabola. Later sections extend this to other choices of curves and families. What's interesting is not only how the problems and topics can be approached and what kind of mathematics evolves from the study, but also what kinds of questions are raised in the process. These questions are highlighted in the article.

1 Tangents

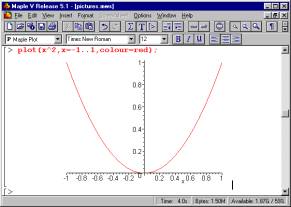

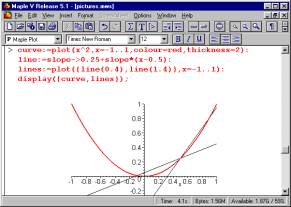

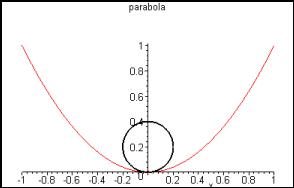

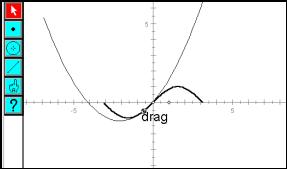

The initial task is to find a "best fit" line out of the family of all lines: find the best fit line at the point (1/2, 1/4) on the parabola $y = x^2$.

Question: What's a "family of curves"? Introduce the concept of a "parameter".

Fig. 1: Plotting a parabola in Maple

Question: How can you find the slope of the chord as the length of the chord shrinks to zero? Concept: limits, L'Hopital's rule.
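To make the limit concrete, here is a short sketch in Python, used only as a neutral stand-in for a CAS; the names f and chord_slope are illustrative. For $y = x^2$ the chord slope from $x_0$ to $x_0 + h$ simplifies to $2x_0 + h$, so at $x_0 = 1/2$ it approaches the tangent slope 1 as h shrinks. Exact fractions are used so that rounding does not blur the pattern.

```python
from fractions import Fraction

def f(x):
    """The parabola y = x^2."""
    return x * x

def chord_slope(x0, h):
    """Slope of the chord from (x0, f(x0)) to (x0 + h, f(x0 + h)); requires h != 0."""
    return (f(x0 + h) - f(x0)) / h

x0 = Fraction(1, 2)
for k in range(1, 6):
    h = Fraction(1, 10 ** k)
    # Algebraically ((x0 + h)^2 - x0^2) / h = 2*x0 + h, which tends to 1 here
    print(f"h = {h}: chord slope = {chord_slope(x0, h)}")
```

Negative values of h can be tried too; the chord slopes then approach 1 from below.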

Question: How was the graph of $f(x) = x^2$ drawn in Sketchpad? (As a locus of points.) How were the tangent lines drawn in Sketchpad? (Only after following up the algebra.)

A classroom activity asking "Which line fits best?" can generate a dialogue ("That one!" "Which one?") that steers towards a common language for describing lines. A line can be specified by its slope, given its point of intersection with the curve. More generally, two points on the line could be given. Or some students may be more comfortable with the notation "y = mx + c".

Questions: What are the relative merits of the different representations of candidate lines? How can we convert from one representation to another? How come in one representation we have to give four numbers, in another we specify two, and in another, only one is required? Concept: degrees of freedom
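A minimal sketch of the conversions, assuming Python with exact fractions and hypothetical helper names: two points on a line carry four numbers, the form y = mx + c carries two, and once the point (1/2, 1/4) on the curve is fixed, a single number (the slope) determines the line.

```python
from fractions import Fraction

def line_from_two_points(p, q):
    """Four numbers (x1, y1, x2, y2) -> (m, c) with y = m*x + c."""
    (x1, y1), (x2, y2) = p, q
    if x1 == x2:
        raise ValueError("vertical line: it has no form y = mx + c")
    m = Fraction(y2 - y1) / Fraction(x2 - x1)
    return m, y1 - m * x1

def line_from_slope_at_point(point, m):
    """One number (the slope m), once the point on the curve is fixed -> (m, c)."""
    x0, y0 = point
    return m, y0 - m * x0

P = (Fraction(1, 2), Fraction(1, 4))  # the fixed point on y = x^2
print(line_from_slope_at_point(P, Fraction(1)))  # slope 1 gives y = x - 1/4
print(line_from_two_points(P, (Fraction(3, 2), Fraction(5, 4))))  # the same line
```

Vertical lines are the one case the (m, c) form cannot express, which is itself a useful point for discussion.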

A competitive element can be introduced by getting students to send their answers from their calculators to a central resource base (see the TI interactive reference in the resources), which could display the ongoing "votes" on screen. Repeating the competition for different choices of the underlying curve gives an atmosphere in which students can practise using the different representations of a line to cast their vote. Of course, every competition needs a clear winner as an outcome.

Questions:

How can we decide the democratic outcome of an election in which each vote is a line?

Does it make sense to average the m's and the c's?

If we arrived at an average line, how could we find which of the cast votes was closest?

Does the average give the best fit line? How can we decide what the best fit line is, anyway?

Concept: alternative distances or metrics in the set of lines giving different winners. The undemocratic nature of mathematical correctness.
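The sketch below, with invented votes (not classroom data), shows that averaging the m's and c's and then asking for the "closest" vote depends on the metric chosen. Two deliberately simple metrics are assumed: comparing slopes only, and comparing intercepts only. With these votes they pick different winners; the vote (1, -1/4) is the tangent line y = x - 1/4.

```python
from fractions import Fraction as F

# Hypothetical votes for the best fit line at (1/2, 1/4), each as (m, c) in y = m*x + c
votes = [(F(1), F(-1, 4)), (F(2), F(-1)), (F(1, 2), F(0)), (F(7, 5), F(-1, 5))]

def average_line(votes):
    """Average the m's and the c's separately."""
    n = len(votes)
    return (sum(m for m, _ in votes) / n, sum(c for _, c in votes) / n)

def slope_distance(u, v):
    """Assumed metric 1: compare slopes only."""
    return abs(u[0] - v[0])

def intercept_distance(u, v):
    """Assumed metric 2: compare intercepts only."""
    return abs(u[1] - v[1])

def closest_vote(votes, target, distance):
    return min(votes, key=lambda v: distance(v, target))

avg = average_line(votes)
print("average line:", avg)
print("closest by slope:", closest_vote(votes, avg, slope_distance))
print("closest by intercept:", closest_vote(votes, avg, intercept_distance))
```

Neither metric is privileged; a Euclidean distance in the (m, c) plane, or a distance measured along the curve, would be further candidates for discussion.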

2 Curvature and fitting circles at a point on the parabola

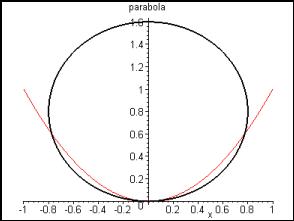

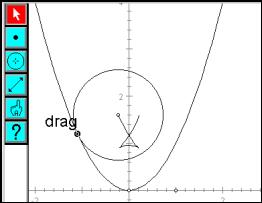

Calculus quickly helps us decide upon the best fit line from the family of lines. Extend the question to fit a circle to the parabola.

Question: How is the circle drawn in Sketchpad: as a function, or using the toolbar?

Again, questions of representation arise. A circle can be specified by centre and radius, or by just a centre if a point on the circumference is given, or by a function.

Question: Which is the preferred representation of a circle? How many choices have to be made to specify a circle?

Using the circle toolbar button, a circle can be drawn with its centre constrained to the vertical axis and a circumference point at the origin. The size of the circle can be altered, ranging from circles that are too small to circles that are too large. Some intermediate size must be "best".

|

|

|

|||

|

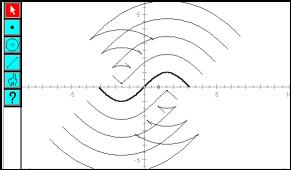

Fig. 7: Dynamic tangent circles in Sketchpad |

Fig. 8: The locus of centres with a small fixed radius |

Question: If students were to vote on the best fit circle, to get a feel for what should be roughly the "right" size, where could we expect the centres to lie? Is there a meaningful concept of an "average" circle? Or the vote that is "closest to average"?

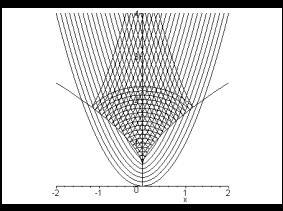

To fit at a point away from the vertex, place the centres of the candidate best fit circles on the normal to the parabola at that point. This leads to questions about the normal to a curve, and about the relationship between the normal and the tangent. Figure 7 shows DGS being used to change the radius of a circle dynamically while it touches the parabola at a fixed point.

The same diagram, if carefully constructed, can be used to take an alternative slice through the family of circles. Figures 8 and 9 show DGS used to fix the radius and let the common point be dragged along the parabola. With a small fixed radius, the centre of the circle traces a U-shaped curve (not a parabola) inside the parabola. For a larger radius, the locus gains two "kinks" (points at which the curve has no tangent) as the point on the parabola moves. These kinks must first appear at some intermediate radius.

Question: What is the smallest radius that produces a "kink" (a lack of smoothness in the locus)?
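One way to explore this question numerically, outside the DGS, is sketched below in Python. It assumes that the centre of a circle of radius r touching $y = x^2$ at $(p, p^2)$ lies at distance r along the inward unit normal $(-2p, 1)/\sqrt{1+4p^2}$. It drags p across an interval and counts how often the centre's horizontal motion reverses; a reversal signals a kink. The interval and step count are arbitrary choices, and the function names are hypothetical.

```python
import math

def centre(p, r):
    """Assumed construction: centre of the circle of radius r touching y = x^2
    at (p, p^2), reached by stepping r along the inward unit normal."""
    k = math.sqrt(1 + 4 * p * p)
    return p - 2 * p * r / k, p * p + r / k

def count_reversals(r, p_min=-2.0, p_max=2.0, steps=4000):
    """Sample the locus of centres for fixed r and count sign changes in the
    horizontal motion of the centre as p increases."""
    xs = [centre(p_min + (p_max - p_min) * i / steps, r)[0] for i in range(steps + 1)]
    diffs = [x1 - x0 for x0, x1 in zip(xs, xs[1:])]
    return sum(1 for d0, d1 in zip(diffs, diffs[1:]) if d0 * d1 < 0)

for r in (0.3, 0.45, 0.55, 1.5):
    print(r, count_reversals(r))
```

With these settings the count is 0 for r = 0.3 and r = 0.45, and 2 for r = 0.55 and r = 1.5, so the threshold lies somewhere between 0.45 and 0.55; repeating the search on a finer set of radii narrows it down.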

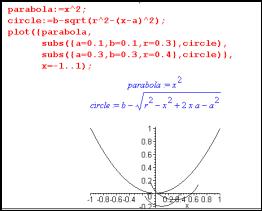

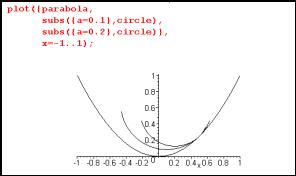

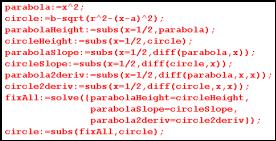

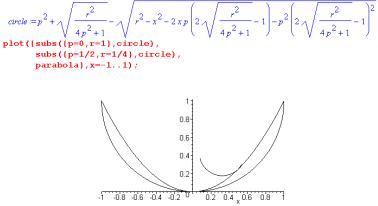

To investigate using Maple, set up expressions for the parabola and a circle. The circle expression is

$$\text{circle} = b - \sqrt{r^2 - (x - a)^2},$$

where the centre is at (a, b) and the radius is r.

Question: Where did this algebra come from?

To plot instances of the circle, use the subs(…) function to substitute values into circle for a, b and r.

Fig. 9: The locus of centres with a large fixed radius

Fig. 10: Loci of centres for a range of radii

Question: The parabola and circle are, strictly speaking, expressions in Maple. To define the parabola as a function, we would write p:=x->x^2; What difference would this make?

The circle expression, plotted against x, gives the lower semicircle, which fits the parabola better than the upper semicircle would.

Question: Will it always be obvious whether to pursue the lower or upper semicircle for the best fit?

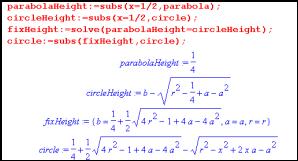

To find the best fit circle at the parabola point (1/2, 1/4), restrict the family of circles to those that pass through this point. Substitute the value x = 1/2 into the expressions for the parabola and the circle; think of this as finding the "heights" of the graphs at x = 1/2. The solve facility is used to determine a condition on the parameters a, b and r: the parameter b is found in terms of the other parameters a and r. When this value for b is substituted into the circle expression, the family, which used to depend upon a, b and r, now depends only upon a and r. All circle graphs in the family pass through (1/2, 1/4).
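Written out by hand, and assuming the circle expression is the lower semicircle $b - \sqrt{r^2 - (x-a)^2}$, the height condition at x = 1/2 and its solution for b are:

$$\frac14 = b - \sqrt{r^2 - \left(\tfrac12 - a\right)^2} \quad\Longrightarrow\quad b = \frac14 + \sqrt{r^2 - \left(\tfrac12 - a\right)^2}$$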

Fig. 12: Maple algebra for matching the heights at (1/2,1/4)

Question: Why was it the symbol b that was eliminated at this stage, rather than the alternatives r or a? Could we force Maple to follow an alternative choice?

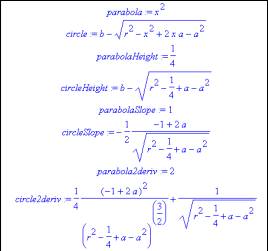

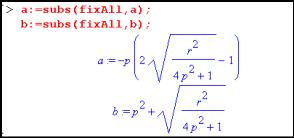

Once the family of circles is restricted to those which pass through the point (1/2,1/4), a further restriction can be made which forces the slopes of the parabola and circle to agree. The slopes are found using the inbuilt diff function for differentiation. The parameter r becomes expressed in terms of a, and the circle family now has only one degree of freedom, through the parameter a.

Fig. 14: Maple algebra for matching the slopes at (1/2,1/4)

All members of the circle family are now tangent to the parabola at (1/2,1/4).
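For comparison with the Maple output, the slope condition can be worked by hand under the same assumption about the circle expression. The lower semicircle has slope $(x-a)/\sqrt{r^2-(x-a)^2}$ and the parabola has slope 1 at x = 1/2, so, taking a < 1/2 (the side on which the circle lies inside the parabola):

$$\frac{\tfrac12 - a}{\sqrt{r^2 - \left(\tfrac12 - a\right)^2}} = 1 \quad\Longrightarrow\quad r^2 = 2\left(\tfrac12 - a\right)^2 \quad\Longrightarrow\quad r = \sqrt2\left(\tfrac12 - a\right)$$

Substituting into the height condition gives b = 3/4 - a, so every remaining centre lies on the normal y = 3/4 - x at (1/2, 1/4).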

Question: Why did r become expressed in terms of a, instead of vice versa? Could we control the algebra to eliminate the parameters in a different order?

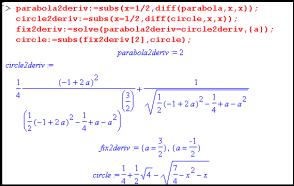

One final step fixes the "best" circle as the one whose second derivative agrees with that of the parabola. The second derivative is calculated using the diff( ) function, and yields a rather unpleasant-looking expression for the circle. However bad the algebra looks, the process has succeeded in producing a best fit circle at the given point of the parabola.
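By hand, still assuming the lower-semicircle form, the second derivative of the circle is $r^2/\left(r^2-(x-a)^2\right)^{3/2}$, while that of the parabola is 2. Using $r^2 = 2\left(\tfrac12 - a\right)^2$ from the slope condition at x = 1/2:

$$\frac{r^2}{\left(r^2 - \left(\tfrac12 - a\right)^2\right)^{3/2}} = \frac{2\left(\tfrac12 - a\right)^2}{\left(\tfrac12 - a\right)^3} = \frac{2}{\tfrac12 - a} = 2 \quad\Longrightarrow\quad a = -\tfrac12,\quad b = \tfrac54,\quad r = \sqrt2$$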

Fig. 16: Maple algebra for matching the second derivative at (1/2,1/4)

The family of circles has reduced to a single circle, with equation

![]()

Question: Why didn't Maple reduce the square root of 4 to 2, simplifying the expression?

|

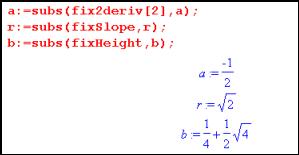

The conditions that have been found for the matching height, slope and second derivative can be used to find values for the centre co-ordinates and radius of the circle. Once we have a definitive result for the best fit, the votes in the competition can be reconsidered and the "best" vote found. |

|

|||

|

Fig. 18: Best fit circle parameters for (1/2,1/4) |

Question: Which of the circle votes were closest to this definitive best fit circle?
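As a quick numerical cross-check (a sketch, not part of the Maple session described here): solving the three conditions gives the centre (-1/2, 5/4) and radius √2. The Python below, which assumes the lower-semicircle form of the circle, confirms that this circle matches the height, slope and second derivative of the parabola at x = 1/2. The same function could be used to score each circle vote.

```python
import math

def semicircle_derivs(x, a, b, r):
    """Height, slope and second derivative at x of the lower semicircle
    y = b - sqrt(r^2 - (x - a)^2) (assumed form; requires |x - a| < r)."""
    u = x - a
    s = math.sqrt(r * r - u * u)
    return b - s, u / s, r * r / s ** 3

def parabola_derivs(x):
    """Height, slope and second derivative of y = x^2 at x."""
    return x * x, 2 * x, 2.0

x0 = 0.5
circle = semicircle_derivs(x0, -0.5, 1.25, math.sqrt(2))
parabola = parabola_derivs(x0)
for name, c, p in zip(("height", "slope", "second derivative"), circle, parabola):
    print(f"{name}: circle {c:.12f}, parabola {p:.12f}")
```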

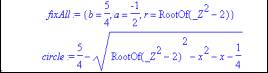

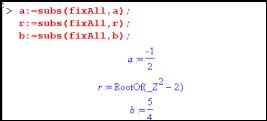

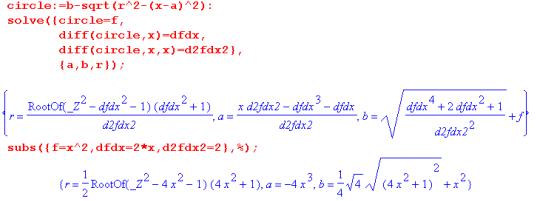

All of this work was done in Maple by following the process we might use if we were to tackle the algebra ourselves. Maple is more powerful than we are at handling large expressions, and it is possible to challenge the program to take on more at once:

Fig. 19: Maple commands for finding the best fit circle quickly

Fig. 20: The working for fitting at (1/2, 1/4)

Build all the expressions needed for comparing heights, slopes and second derivatives. The solve() command can handle multiple simultaneous equations.

Question: What are the relative merits of working through a problem slowly in Maple compared to using fewer steps? Which is the preferred approach?

The values for a, b and r can be found, as before, using the three constraints for height, slope and second derivative, as shown in Fig. 21.

Fig. 21: Best fit circle parameters revisited

The expression for the value of r in Figure 21 includes the phrase RootOf. RootOf(f(_Z)) denotes the possible value(s) of _Z that are roots of f(_Z). For example, RootOf(_Z - 1) is the value +1, and RootOf(_Z^2 - 2) gives the alternative values ±√2. The appearance of this forbidding notation shows that the choice between our two approaches can affect the appearance of the outcome.

Question: Why do the two approaches give different triples for a, b and r:

![]()

It's always worth trying the simplify() command when confronted by a complicated algebra expression. In this case, simplify(circle) does produce the earlier expression.

Question: Why doesn't Maple automatically simplify all its output?

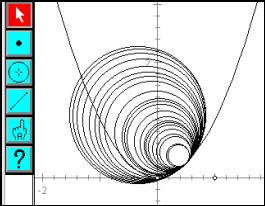

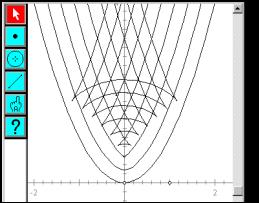

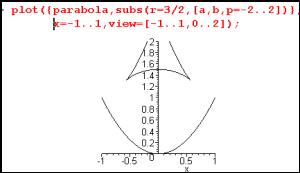

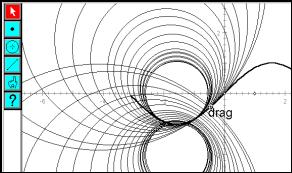

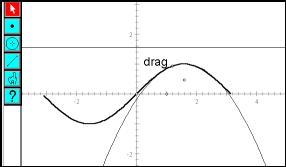

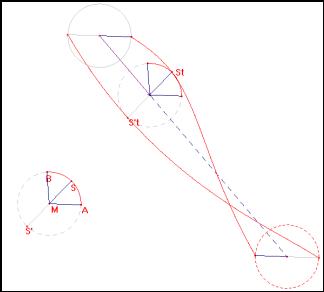

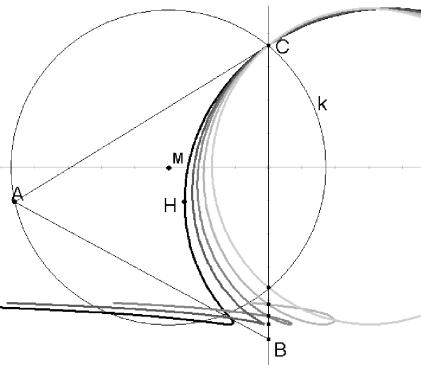

3 Best fit circles at arbitrary points on the parabola

To study the best fit circle at an arbitrary point along the parabola, introduce variables p and q for the co-ordinates of a point on the parabola. The expressions for the parabola and circle are the same as before, and the process of finding matching height and slope is the same as before. The work we have to do is no more complex than for a single point (1/2,1/4), but the algebraic computation becomes trickier.

The derived expression for the circle is used to plot a tangent circle at any point on the parabola:

Fig. 22

The centre of any tangent circle is found in terms of the parameters p (for the tangent point) and r (for the circle radius).

Fig. 23a: Centre co-ordinates for an arbitrary tangent circle

Fig. 23b: The locus of centres as the radius changes

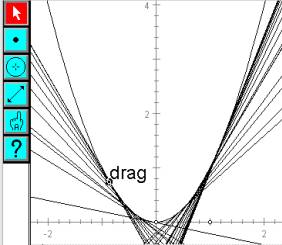

Fig. 23 shows a and b plotted as a parametric curve as r changes: the locus of the centres of circles touching the parabola at a fixed point (p = 1/2) as the radius r varies. This locus is a straight line, a segment along the normal to the curve.
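Independently of the Maple output in Fig. 23a, one can assume that the centre lies at distance r along the inward unit normal $(-2p, 1)/\sqrt{1+4p^2}$ at the point $(p, p^2)$. The Python sketch below (function name hypothetical) uses that assumption. With p fixed at 1/2, every centre satisfies a + b = 3/4, i.e. it lies on the normal line, and r = √2 gives the best fit centre (-1/2, 5/4).

```python
import math

def tangent_circle_centre(p, r):
    """Assumed construction: centre (a, b) of the circle of radius r touching
    y = x^2 at (p, p^2), on the inner side of the parabola."""
    k = math.sqrt(1 + 4 * p * p)
    return p - 2 * p * r / k, p * p + r / k

# Fixed contact point p = 1/2 and varying radius: centres lie on the normal y = 3/4 - x
for r in (0.5, 1.0, math.sqrt(2), 2.0):
    a, b = tangent_circle_centre(0.5, r)
    print(f"r = {r:.3f}: centre ({a:.4f}, {b:.4f}), a + b = {a + b:.4f}")
```

Fixing r and varying p instead gives the curved loci of Figs. 24 and 25.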

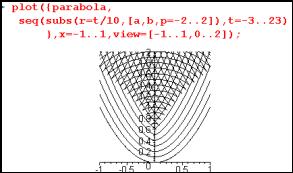

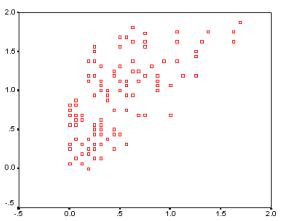

Fig. 24 shows a more interesting curve, created by varying p and leaving r fixed (value 3/2). This is the locus of centres as a circle of fixed radius moves along the parabola.

|

|

|

|||

|

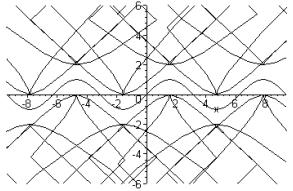

Fig. 25: Centre loci for a range of radii |

Fig. 25 shows the family of centre loci, each for a fixed radius, with the radius taking a range of values from 3/10 to 23/10. It recreates the DGS loci shown in Fig. 10. Where are these "kink" points (points at which the curve is non-differentiable)? Given the parametric points (a, b) with parameter p, imagine a point travelling along the locus as p changes. At a kink, the point stops moving to the right, turns round, and starts moving to the left. It also stops moving upwards, turns round, and starts moving downwards. So the expressions for a and b must have stationary points there.
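Assuming the inward-normal form of the centre, $a(p) = p - \dfrac{2pr}{\sqrt{1+4p^2}}$ and $b(p) = p^2 + \dfrac{r}{\sqrt{1+4p^2}}$, differentiating with respect to p shows that both derivatives share a common factor:

$$a'(p) = 1 - \frac{2r}{(1+4p^2)^{3/2}}, \qquad b'(p) = 2p\left(1 - \frac{2r}{(1+4p^2)^{3/2}}\right)$$

Both vanish together where $(1+4p^2)^{3/2} = 2r$, that is, where $r = \tfrac12(1+4p^2)^{3/2}$, the radius of the best fit circle at $(p, p^2)$. This radius is smallest, 1/2, at the vertex p = 0; at p = 1/2 it equals √2.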

Fig. 26a: The locus of kinks

Fig. 26b

More Maple work can be done to match the second derivatives and derive the best fit circle centres. Plotting the best fit circle centres reproduces the "locus of kinks". The algebra can be seen to be the same, as illustrated here in Sketchpad.

Defining "best fit" by matching second derivatives is thus shown to agree with the more intuitive definition via a "critical radius": a radius that produces a kink in the locus of centres for that fixed radius.

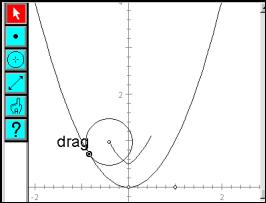

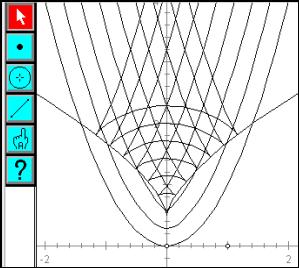

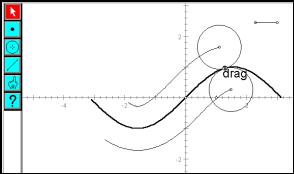

4 Curvature on handheld technology

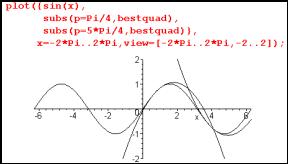

It would be easy to imagine that Maple, running on a powerful PC, is capable of this kind of algebra while handheld technology would struggle. As shown below, the TI89 has no problem reproducing this work. The following screen dumps, taken from the built-in text editor, tell the story interspersed with command lines. The resulting algebra is in some cases too large to fit on the screen.

|

1. |

2. |

3. |

|

|

|

|

|

|

|

|

|

|

|

|

Questions: Why solve for b? Is there a better way to refer to the solution for b, other than copying and pasting from the home screen? |

Question: If b has been given a value, is c(…) still a function with four parameters? |

||

|

4. |

5. |

6. |

|

|

|

|

|

|

|

|

|

|

|

|

Question: Why are there two results for a? What if we had chosen to solve for the parameters a, b and r in a different order? |

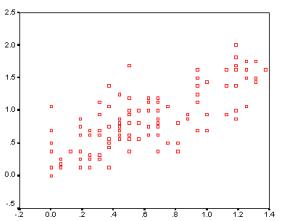

Question: Which solution for a is the right one to use for the next step? Use a parametric plot to fix values of r (values fixed as 0.3, 0.4, .., 1.3) |

Plot the centre co-ordinates (a,b) as p changes. Question: why is it necessary to use xtemp and ytemp here? |

|

|

7. |

8. |

9. |

|

|

|

|

|

|

|

|

|

|

|

|

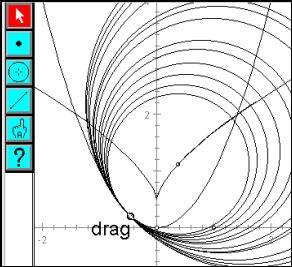

Question: Why not type solve(d(d(f(x),x),x)=d(d(..? instead of evaluating the derivatives and copying them into the solve expression? |

Question: Why did the solve ( ) command give multiple results? Which one was chosen and why? |

Question: Would the plots appear faster if these expressions were evaluated on the home screen and the output pasted into the Y= screen? |

|

|

10. |

|

11. |

|

|

|

Question: Why do these circles have gaps at the sides? |

|

|

|

|

Would there be any way of plotting the circles to avoid this problem? |

|

|

5 Fitting circles to any curve

The Maple algebra can be generalised to fit circles to any curve, giving curvature formulae for the best fit centre and radius.


This result for the general best fit radius and centre can be written as


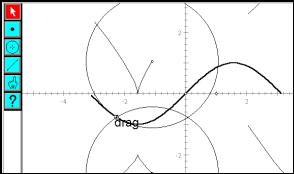
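In standard notation, possibly arranged differently from the Maple output, the radius r and centre (a, b) of the best fit circle to y = f(x) at a point where $f''(x) \neq 0$ are:

$$r = \frac{\left(1 + f'(x)^2\right)^{3/2}}{\lvert f''(x)\rvert}, \qquad a = x - \frac{f'(x)\left(1 + f'(x)^2\right)}{f''(x)}, \qquad b = f(x) + \frac{1 + f'(x)^2}{f''(x)}$$

For the parabola at x = 1/2 (f' = 1, f'' = 2) these give r = √2 and (a, b) = (-1/2, 5/4).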

This could be applied, for a different example, to fit a circle to a sine wave:

Question: How can we create an image of the sine wave? How can the derivatives of the sine curve be found in Sketchpad, for plugging into the curvature expressions? Fig. 28 shows the locus of centres of the best fit circles.

Fig. 28: Best fit circles to a sine wave

Fig. 29: Best fit circle and centre locus

Fig. 30: Loci of centres for a fixed, small radius

Fig. 31: Loci of centres for a fixed, large radius

Fig. 32: Loci of centres for a range of fixed radii

Figures 30 to 32 show the loci of centres of circles with a fixed radius. Again, we see non-differentiable points (kinks) appearing for larger radii.

Question: Will such kinks always occur for any choice of curve?

Fig. 33: Algebra for curvature of sine waves

Fig. 33 shows the kind of algebra that Maple produces when analysing the fitting of circles to a sine wave. The algebra is forbidding and gives the impression that we would never be able to recreate the result by hand. The commands typed in, however, are no more complex than those used when fitting circles to parabolae.

Question: Could we recreate such forbidding algebra by hand? Would we want to? What would that teach us?
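The observation that the commands do not get harder when the curve changes can be echoed outside Maple. The Python sketch below assumes the standard curvature formulae (not the author's Maple code) and takes the derivatives as hand-supplied functions, a simplification standing in for the CAS's diff. The same call then serves both the parabola and the sine wave.

```python
import math

def best_fit_circle(f, df, d2f, x):
    """Centre (a, b) and radius r of the best fit circle to y = f(x) at x,
    using the standard curvature formulae; undefined where f''(x) = 0."""
    s1, s2 = df(x), d2f(x)
    if s2 == 0:
        raise ValueError("f''(x) = 0: no best fit circle at this point")
    q = 1 + s1 * s1
    a = x - s1 * q / s2
    b = f(x) + q / s2
    r = q ** 1.5 / abs(s2)
    return a, b, r

# The parabola y = x^2 at x = 1/2, derivatives supplied by hand
print(best_fit_circle(lambda x: x * x, lambda x: 2 * x, lambda x: 2.0, 0.5))
# The sine wave at x = pi/2, where the circle sits below the crest
print(best_fit_circle(math.sin, math.cos, lambda x: -math.sin(x), math.pi / 2))
```

At the inflection points of the sine wave, where the second derivative is zero, the function refuses to return a circle, matching the fact that the best fit circle degenerates there.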

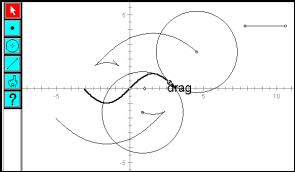

6 Fitting a best fit parabola

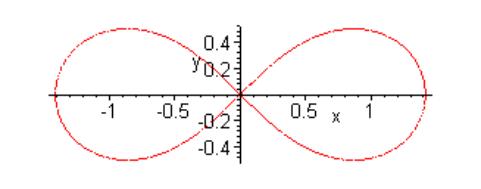

The construction of the parabola in Figure 35 was done by plotting the function

![]()

The responsiveness of the image is limited by the amount of calculation to be done at each stage as the point is dragged along the sine wave. Constructing the circle as a locus of equidistant points gave a smoother outcome in the curvature work. To improve the responsiveness of the best fit parabola, it may be possible to reconstruct the parabola as the locus of points equidistant from a focus and a directrix. This calls for some more algebra: how can we convert from the quadratic polynomial representation of the parabola to a focus and directrix? The focus is a point somewhere in the plane, and the directrix is a horizontal line, specified by its height.

The quadratic $x^2$ has its focus at $(0, \tfrac14)$ and its directrix at height $-\tfrac14$. To generalise to an arbitrary quadratic $ax^2 + bx + c$, we need to understand the roles of the coefficients a, b and c.

The approach used to find a best fit circle can easily be applied to find best fit parabolae. The images can be constructed statically or dynamically. But one issue arises which leads back to paper and pencil constructions of parabolae.

Fig. 35 a-c

Completing the square gives

$$ax^2 + bx + c = a\left(x + \frac{b}{2a}\right)^2 + c - \frac{b^2}{4a}.$$

The parabola $y = x^2$ has been scaled vertically by a and shifted by $\left(-\frac{b}{2a},\ c - \frac{b^2}{4a}\right)$. The focus becomes

$$\left(-\frac{b}{2a},\ c - \frac{b^2}{4a} + \frac{1}{4a}\right)$$

and the directrix lies at height $c - \frac{b^2}{4a} - \frac{1}{4a}$.

Question: How does the notion of shifting and stretching change the algebra for focus and directrix?

Fig. 36: Parabola plotted from directrix and focus

Fig. 37: Focus locus for best fit parabolae
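To connect the best fit parabola with the focus-directrix construction, here is a sketch under two assumptions: "best fit" means matching height, slope and second derivative (which makes the parabola the quadratic Taylor polynomial), and the parabola has a vertical axis, written y = Ax² + Bx + C with capital letters to avoid a clash with the circle's a and b. The focus and directrix then follow from completing the square. Function names are hypothetical.

```python
from fractions import Fraction as F
import math

def best_fit_parabola(f0, f1, f2, x0):
    """Assumed definition of best fit: coefficients (A, B, C) of y = A*x^2 + B*x + C
    whose height, slope and second derivative at x0 equal f0, f1, f2."""
    A = f2 / 2
    B = f1 - f2 * x0
    C = f0 - f1 * x0 + f2 * x0 * x0 / 2
    return A, B, C

def focus_directrix(A, B, C):
    """Complete the square: vertex (h, k) = (-B/(2A), C - B^2/(4A)).
    Focus at height k + 1/(4A), directrix at height k - 1/(4A)
    (for A < 0 the focus lies below the vertex)."""
    if A == 0:
        raise ValueError("A = 0: not a parabola")
    h = -B / (2 * A)
    k = C - B * B / (4 * A)
    return (h, k + 1 / (4 * A)), k - 1 / (4 * A)

# Exact check: the best fit parabola to y = x^2 at (1/2, 1/4) is x^2 itself
print(best_fit_parabola(F(1, 4), F(1), F(2), F(1, 2)))
print(focus_directrix(F(1), F(0), F(0)))

# Floating point: best fit parabola to the sine wave at x0 = pi/2
x0 = math.pi / 2
coeffs = best_fit_parabola(math.sin(x0), math.cos(x0), -math.sin(x0), x0)
print(coeffs, focus_directrix(*coeffs))
```

Dragging x0 along the sine wave and recomputing the focus would trace the kind of focus locus shown in Fig. 37.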

7 Conclusions

This work was about fitting from function families. It could have been about many other topics: the issue under consideration is the experience of doing mathematics aided by CAS and DGS. The questions raised make links from this specific investigation to wider issues in both geometry and algebra:

alternative algebraic representations; metrics on spaces; smoothness; loci, paper and pencil constructions; degrees of freedom; Taylor series, limits; strategy in algebra work; tangents and normals; families and parameters; Cartesian and parametric forms for graphs; multiple solution sets; correctness; effects of scaling and stretching on algebra; where algebra comes from;…

The most commonly phrased questions are of the form:

Why did the technology do this? / How to make the technology do that…?

How to decide between…

How to find…

Does it make sense to…

Considering these questions helps to create a coherent understanding of the subject. Even questions that appear to relate directly to understanding the technology rather than the maths often disguise mathematical ideas about dependency between objects, or about using different expressions for the same thing.

We could set ourselves the task of following behind the CAS tools, trying to match their algebraic techniques and repertoire by hand. An alternative approach opens up higher conceptual questions about how topics link together, dependency between mathematical objects, steps in mathematical investigation and proof. It could be argued that in this study of curvature, algebra has been acting as the tool for investigation rather than comprising the focus of the task in hand. What drives the work forward is curiosity about the algebraic expressions and the images produced.

The questions emphasised in this work could form part of an assessment strategy. Students could be challenged to come up with their own questions. A fun way to present the rich structure of ideas would be to start with a description like the one given here on a web page. Students could add their questions to the text as hyperlinks to their own pages, discussing the different points raised. Both Sketchpad and Cabri can produce Java applets, so dynamic images can form an integral part of work on a web page.

8 Resources

Technical:

TI89 syntax: http://education.ti.com/product/tech/89/guide/89guideus.html

TI interactive: http://education.ti.com/product/software/tii/features/features.html

Maple CAS:

Geometer's Sketchpad:

Java Sketchpad:

A paper including work on fitting tangents:

Jean-Baptiste Lagrange: "A didactic approach of the use of CAS to learn mathematics", CAME '99

Acknowledgement. Thanks to Adrian Oldknow and Warwick Evans for inspiring me to take CAS on board, years ago. Thanks to TI for the calculator, and to Paul Harris for his help with Maple syntax.

A microworld for helping students to learn algebra

Denis Bouhineau, Jean-François Nicaud, Xavier Pavard, Emmanuel Sander

Nantes, Paris, France

1. Introduction

2. What can be a microworld for algebra

3. Main actions of the editor of an algebraic microworld

4. Direct manipulation

5. The Aplusix microworld for algebra

6. Domain of validity and discussion

This paper describes the design principles of a microworld devoted to the manipulation of algebraic expressions. The microworld contains an advanced editor with classical actions and direct manipulation. Most of the actions are available in two or three modes: a text mode that manipulates characters, a structure mode that takes care of the algebraic structure of the expressions, and an equivalence mode that takes into account the equivalence between expressions. The microworld also makes it possible to represent reasoning trees. The equivalence of the expressions built by the student is evaluated and the student is informed of the result. The paper also describes the current state of the implementation of the microworld; a first prototype was completed at the beginning of February 2001.

1 Introduction

Mathematical microworlds have been developed since the late sixties. The first one was Logo (Papert 1980), devoted to recursive programming and geometry. The concept of a mathematical microworld was established in the mid and late eighties (Thompson 1987, Laborde 1989, Balacheff and Sutherland 1994), and its main features were identified: objects, relationships, operators and direct manipulation. Several microworlds have been built for geometry and algebra. Some of them are distributed as commercial products and have (or have had) real success, like Logo and Cabri Geometry (Laborde 1989). The latter has been implemented on pocket calculators and distributed as computer software by Texas Instruments. Microworlds for geometry make a real contribution to learning in the domain and have introduced a new concept of geometry called dynamic geometry.

In this paper, we consider microworlds for formal algebra. Algebraland (Foss 1987) and McArthur's system (McArthur e.a. 1987) can be seen as the first ones. Other software for algebra, like systems devoted to modelling word problems (Koedinger e.a. 1997), is not considered here. In our previous work, we built what we consider second order microworlds for algebra. Our systems were advanced prototypes (Nicaud e.a. 1990, 1994). We conducted many experiments and analysed students' behaviour and learning with these systems (Nguyen-Xuan e.a. 1993, 1999). We are now designing and building what we consider a first order microworld for algebra. This system is intended to become a commercial product. In the future, we will combine the features of first and second order microworlds.

The fundamental question is: what can a microworld for algebra be? In this paper, we define first and second order microworlds. We then detail first order microworlds and describe the main specifications of the system we are developing and the current state of its implementation.

2 What can be a microworld for algebra

According to Laborde (1989), a microworld is a world of objects and relationships. There is a set of operators able to act on the objects to create new ones with new relationships. Direct manipulation has an important place in microworlds. Balacheff and Sutherland (1994) emphasise an epistemological domain of validity, which provides the relationship between the representations and what they are intended to represent. Thompson (1987) states that mathematical microworlds must facilitate the process of constructing objects and relationships and focus on the construction of meaning; they must use a model not embedded in the curriculum, and they must not provide instruction.

In formal algebra, as said above, the concrete objects are expressions. The basic relationship between the objects is the structural relationship over the expressions: 12 is the first argument of the second argument of

![]()

The second relationship is the equivalence relationship. There are two main sorts of operators.

Operators of the first sort allow any well-formed expression to be built: they are the operators of algebraic expression editors. We call algebraic microworlds that focus on these operators first order microworlds. First order algebraic microworlds are included in many word processing systems, where they are called equation editors. We will show below that most of them have a poor relationship with the semantic objects of algebra. McArthur's system (McArthur e.a. 1987) has features of a first order algebraic microworld.

Operators of the second sort are the transformation rules that allow new steps to be built in a reasoning process. We call algebraic microworlds that focus on transformation rules second order microworlds. Several second order algebraic microworlds have been built in the past (Bundy and Welham 1981, Foss 1987, Thompson 1989, Oliver and Zukerman 1990, Beeson 1996). The previous systems developed by our team belong to this class. Students solved problems by applying transformation rules to selected sub-expressions; they were not allowed to build a step in any other way. The community did not really regard these systems as microworlds, maybe because they teach (something Thompson rejects), maybe because they lack operators of the first sort, or maybe because the people who built them placed more emphasis on the tutoring aspects.

In the rest of the paper, we focus on first order algebraic microworlds, which we simply call algebraic microworlds. In section 3, we consider the classical features of an editor for an algebraic microworld. In section 4, we tackle the issue of direct manipulation.

3 Main actions of the editor of an algebraic microworld

Objects and the structural relationship

A microworld must allow the user to create objects. In the case of algebra, this means having an editor for algebraic expressions. Visual fidelity requires the usual 2D representation for the editor's interface. This representation contains text parts that can be seen as sequences of characters, like 2x+1, and well-delimited block parts like ![]() and ![]().

Conceptual fidelity requires the structural relationship to be taken into account. So we propose to give students well-formed expressions as much as possible. For that purpose, expressions with missing arguments are completed with a placeholder, for example "?" (e.g., typing + at the end of 2x gives 2x + ?), and expressions that cannot be completed are highlighted (like spelling errors in MS Word).
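The completion rule can be illustrated with a small Python sketch. It is our own minimal model under stated assumptions, not the Aplusix code: expressions are hypothetical `Op` trees with string leaves, the placeholder is the string "?", and `render` omits parentheses for brevity.

```python
from dataclasses import dataclass
from typing import List, Tuple, Union

HOLE = "?"  # placeholder for a missing argument, as in the text


@dataclass
class Op:
    symbol: str         # operator, e.g. "+"
    args: List[object]  # sub-expressions: Op instances or leaf strings


Expr = Union[str, Op]  # a leaf is an atom such as "2x" or "y"


def type_binary_operator(current: Expr, symbol: str) -> Op:
    """Typing a binary operator after `current` keeps the expression
    well formed by filling the missing right argument with a hole."""
    return Op(symbol, [current, HOLE])


def fill_first_hole(e: Expr, value: Expr) -> Tuple[Expr, bool]:
    """Replace the leftmost hole by `value`; report whether one was found."""
    if e == HOLE:
        return value, True
    if isinstance(e, Op):
        new_args, done = [], False
        for a in e.args:
            if not done:
                a, done = fill_first_hole(a, value)
            new_args.append(a)
        return Op(e.symbol, new_args), done
    return e, False


def is_complete(e: Expr) -> bool:
    """An expression is complete when it contains no hole."""
    if isinstance(e, Op):
        return all(is_complete(a) for a in e.args)
    return e != HOLE


def render(e: Expr) -> str:
    """Linear display; parentheses are omitted in this sketch."""
    if isinstance(e, Op):
        return f" {e.symbol} ".join(render(a) for a in e.args)
    return e
```

For example, typing + after 2x gives an expression that renders as 2x + ? and is not yet complete; filling the hole with 1 gives 2x + 1.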

The main actions of an editor are: input, delete, select something, apply an operator to something, copy something, delete something, cut something and paste something. Let us consider these actions through the structural relationship, which implies that the "something" above is a sub-expression. We call structure mode a mode in which well-formed sub-expressions are preserved during the actions, and text mode a mode in which they are not. Both modes are useful: the former avoids destroying what has already been built, the latter lets the user do anything.

Proposals for the implementation of the actions

Input from the keyboard (or by clicking a button) when the expression has an insertion point: to keep the expression well formed, the input of a text operator adds "?" when necessary; in the structure mode, the input of a block operator adds "?" for each argument; in the text mode, the input of a block operator takes its arguments from the neighbouring elements (e.g., typing / after x in xy yields x/y).

Select a sub-expression: the structural relationship suggests selecting only well-formed sub-expressions. Note that 2x + 4 in 2x + y + 4 and –mp in 1 – 2mxp are well-formed sub-expressions, since the arguments of a sum or a product can be regrouped and reordered. The natural idea for selection is to take the smallest sub-expression that includes the dragged area. Such actions were implemented in our previous prototypes and were appreciated by students and teachers.
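The "smallest covering sub-expression" rule can be sketched as a tree search. In this hypothetical Python model (our assumption, not the authors' data structure), each leaf records its character offset in a one-line display, and an n-ary commutative node may return a run of its arguments as a sub-expression. Only contiguous drags are handled; a non-contiguous selection such as 2x + 4 in 2x + y + 4 would need a multi-selection gesture that the sketch does not model.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass
class Leaf:
    text: str
    start: int  # offset of the first character in the displayed line

    @property
    def end(self) -> int:
        return self.start + len(self.text)


@dataclass
class NAry:
    symbol: str         # an associative-commutative operator such as "+"
    args: List[object]  # Leaf or NAry children, in display order

    @property
    def start(self) -> int:
        return self.args[0].start

    @property
    def end(self) -> int:
        return self.args[-1].end


Node = Union[Leaf, NAry]


def select(node: Node, a: int, b: int) -> Node:
    """Smallest well-formed sub-expression covering the dragged area [a, b)."""
    if isinstance(node, NAry):
        # children whose characters overlap the dragged area
        hit = [c for c in node.args if c.start < b and a < c.end]
        if len(hit) == 1:
            return select(hit[0], a, b)
        if 1 < len(hit) < len(node.args):
            # several (but not all) arguments of an n-ary operator
            # still form a well-formed sub-expression
            return NAry(node.symbol, hit)
    return node


def to_text(node: Node) -> str:
    if isinstance(node, Leaf):
        return node.text
    return f" {node.symbol} ".join(to_text(c) for c in node.args)
```

With the expression 2x + y + 4, dragging over the characters of 2x + y returns that partial sum, while a drag that reaches into all three arguments returns the whole expression.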

Reasoning and the equivalence relationship

An important activity in formal algebra consists of solving problems by successive transformations of expressions that preserve equivalence. After the editing of expressions, we have to consider the representation of reasoning processes in an algebraic microworld. A reasoning process contains not only successive equivalence-preserving transformations of expressions, but also backtracks and sub-problems. A backtrack consists of going back to an earlier step to try another way of solving the problem (so the steps form a tree). A sub-problem is sometimes extracted and solved to help solve a problem. Given a problem <T e>, T being the type and e the expression, the most frequent situation for a sub-problem is a problem <T1 e1>, where e1 is a sub-expression of e, such that the solved form of the sub-problem benefits the original problem. For example, <solve A=0>, where A is a polynomial of degree higher than 1, benefits from solving the sub-problem <factor A>.

We consider that an algebraic microworld must represent reasoning processes, the equivalence between expressions, backtracks and sub-problems.

Propositions for the implementation of reasoning processes

In our previous prototypes, we implemented all the above features. The link between equivalent steps was an arrow corresponding to a tutored action. We now think it would be more appropriate for an algebraic microworld to use a double arrow meaning "these expressions are equivalent". Backtracking was implemented by allowing any step to have several successors; we think this is worth keeping. Sub-problems were linked to problems with a special arrow; we now think it would be better to solve sub-problems elsewhere on the sheet or on other sheets. As for the expressions, the system must highlight the expressions that are not equivalent.
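A reasoning process with backtracks and sub-problems maps naturally onto a tree. The Python sketch below is one possible representation, assumed for illustration (the class names `Step` and `Problem` are hypothetical): a step with several successors records a backtrack, and a sub-problem <T1 e1> is attached to the step where it was extracted.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    expr: str
    successors: List["Step"] = field(default_factory=list)      # several successors = backtrack
    subproblems: List["Problem"] = field(default_factory=list)  # problems extracted at this step

    def add(self, expr: str) -> "Step":
        """Add a successor step claimed to be equivalent to this one."""
        child = Step(expr)
        self.successors.append(child)
        return child


@dataclass
class Problem:
    kind: str   # the problem type T, e.g. "solve" or "factor"
    root: Step  # the step holding the expression e


def lines_of_reasoning(step: Step) -> List[List[str]]:
    """Every path from `step` to a leaf of the reasoning tree."""
    if not step.successors:
        return [[step.expr]]
    return [[step.expr] + path
            for child in step.successors
            for path in lines_of_reasoning(child)]


# Example: <solve x^2 - 3x + 2 = 0> with a backtrack and a <factor ...> sub-problem.
p = Problem("solve", Step("x^2 - 3x + 2 = 0"))
p.root.add("x^2 = 3x - 2")                 # first attempt, later abandoned
second = p.root.add("(x - 1)(x - 2) = 0")  # backtrack: a second successor of the root
p.root.subproblems.append(Problem("factor", Step("x^2 - 3x + 2")))
second.add("x = 1 or x = 2")
```

Each path returned by `lines_of_reasoning` is one line of reasoning; checking that consecutive expressions on a path are equivalent would be the job of a separate equivalence test.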

4 Direct manipulation

In a microworld, direct manipulation allows objects to be moved while taking the relationships into account. Expressions, sub-expressions and operators can be manipulated through the structural and equivalence relationships, or without regard to these relationships.

Drag & drop a sub-expression

A selected sub-expression s can be dragged and dropped inside an expression e, like a word in a word processor. Such an action changes the expression; let us call u the expression obtained. In the structure mode, the constraints we consider come from the structural relationship: (1) u is a well-formed expression, (2) s is a sub-expression of u, (3) the sub-expressions of e that do not include s are left unchanged. Note that these constraints sometimes introduce parentheses around s. In the text mode, only the first constraint applies and parentheses are never added. When s is a sub-expression of u, s remains selected and a new drag & drop may be performed. In the text mode, when s is not a sub-expression of u, there is no selection in u at the end of the action (see an example in section 5, Fig. 3). The aim of drag & drop in these two modes is to allow the user to build the expression (s)he wants.
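The structure-mode constraints can be made concrete with a tiny Python model, in the spirit of Fig. 2 but with an expression of our own choosing. `Sum`, `Prod` and `drop_into_product` are hypothetical names; products are displayed by juxtaposition, and the renderer adds parentheses exactly when a sum becomes a factor, which is how constraint (2) introduces parentheses around s.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Sum:
    args: list


@dataclass
class Prod:
    args: list


def render(e, parent=None) -> str:
    """Linear display; a product is written by juxtaposition."""
    if isinstance(e, str):
        return e
    if isinstance(e, Sum):
        text = " + ".join(render(a, e) for a in e.args)
        # a sum inside a product needs parentheses to stay a sub-expression
        return f"({text})" if isinstance(parent, Prod) else text
    return "".join(render(a, e) for a in e.args)


def drop_into_product(total: Sum, run: List[int], prod_index: int, pos: int):
    """Structure-mode drag & drop (hypothetical helper): the arguments
    total.args[i] for i in `run` form the selection s, which is removed
    and inserted at position `pos` of the product total.args[prod_index]."""
    s = Sum([total.args[i] for i in run]) if len(run) > 1 else total.args[run[0]]
    target = total.args[prod_index]
    new_prod = Prod(target.args[:pos] + [s] + target.args[pos:])
    rest = [new_prod if i == prod_index else a
            for i, a in enumerate(total.args) if i not in run]
    return Sum(rest) if len(rest) > 1 else rest[0]
```

Dropping the sub-expression a + b of 2y + a + b between 2 and y gives 2(a + b)y: the factors 2 and y are left unchanged, as constraint (3) requires.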

The aim of drag & drop in the equivalence mode is very different: it is to maintain equivalence. So we add constraint (4): u must be equivalent to e. With constraints (1) to (4), the only general move that can be made is displacing an argument of a commutative operator. To get a more powerful equivalence drag & drop, we withdraw constraint (3), so that sub-expressions of e that do not include s may be changed.

Let us take as an example the expression (y-1)(x+x^2), select the first occurrence of x and drag it between the two parentheses. We may expect to get (y-1)x(1+x), which is a factorisation. One explanation for factoring in this context is that the moved expression is an argument of a sum that is itself an argument of a product; the action is then performed as "take x as a common factor of the sum and insert it as a factor of the product". Note that constraint (3) must be withdrawn for this, because x^2 has been changed into x.
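For polynomial expressions, constraint (4) can be checked by comparing expanded normal forms. The sketch below is our illustration of that idea only; the text does not say how Aplusix evaluates equivalence. Polynomials in x and y with integer coefficients are represented as hypothetical dicts from exponent pairs to coefficients, so that expanding and cancelling gives a canonical form and dict equality decides equivalence.

```python
from collections import defaultdict
from typing import Dict, Tuple

# A polynomial in x and y: {(i, j): c} stands for the sum of c * x**i * y**j.
Poly = Dict[Tuple[int, int], int]


def add(*ps: Poly) -> Poly:
    out = defaultdict(int)
    for p in ps:
        for mono, c in p.items():
            out[mono] += c
    return {m: c for m, c in out.items() if c != 0}  # drop cancelled terms


def mul(*ps: Poly) -> Poly:
    out: Poly = {(0, 0): 1}
    for p in ps:
        acc = defaultdict(int)
        for (i1, j1), c1 in out.items():
            for (i2, j2), c2 in p.items():
                acc[(i1 + i2, j1 + j2)] += c1 * c2
        out = {m: c for m, c in acc.items() if c != 0}
    return out


def equivalent(p: Poly, q: Poly) -> bool:
    """Expanded, cancelled forms are canonical, so comparing them decides equivalence."""
    return p == q


ONE, MINUS_ONE = {(0, 0): 1}, {(0, 0): -1}
X, Y = {(1, 0): 1}, {(0, 1): 1}
X2 = mul(X, X)

before = mul(add(Y, MINUS_ONE), add(X, X2))     # (y-1)(x+x^2)
after = mul(add(Y, MINUS_ONE), X, add(ONE, X))  # (y-1)x(1+x), the factorisation
swapped = mul(add(Y, MINUS_ONE), add(X2, X))    # (y-1)(x^2+x), a commutative move
wrong = mul(add(Y, MINUS_ONE), X, add(ONE, X2)) # (y-1)x(1+x^2), not equivalent
```

Under this representation the factorisation and the commutative move both compare equal to the original expression, while (y-1)x(1+x^2) does not, so a drop producing it would violate constraint (4).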

5 The Aplusix microworld for algebra

Our team plans to build a first order algebraic microworld in September 2001. The Aplusix microworld will include two main activities: first, allowing the student to build expressions; second, allowing the student to solve a problem on an algebraic expression. A first prototype was completed at the beginning of February 2001. The recording of interactions is already implemented: every elementary action is recorded so that the students' behaviour can be analysed in the laboratory, by hand or by programs. The first experiments will be conducted with 15-year-old students in mid 2001 in order to evaluate the two activities.

Development is done with Borland Delphi for Windows. Its current state is described here.

The 2D display of expressions is implemented with the following operators: + - × / ^ √ = ≠ < > ≤ ≥ and or not. Selection, including the selection of several arguments of a commutative operator, is implemented (see Fig. 1). Insert, delete, copy, cut and paste are implemented in the text and structure modes.

The display of the reasoning tree is implemented (Fig. 3). The equivalence of two expressions A and B can be evaluated when A and B are polynomials in one variable, and when A and B are equations in one unknown of degree less than 4.

Direct manipulation is implemented in the text and structure modes (Fig. 2). The equivalence drag & drop is implemented only for moving an argument within a commutative operator. A more complex equivalence drag & drop is not necessary for our first experiments with 15-year-old students and needs deeper reflection: our goal is not to implement a pretty mechanism but a mechanism with epistemological value.

![]()

Fig. 1: Display of an expression in the usual 2D representation with selection

Fig. 2: A drag & drop. The selected expression (on the left) is dropped between 2 and y

Fig. 3: A reasoning tree with non-equivalent expressions

Later developments of our first order algebraic microworld will include: (1) the representation of new operators, such as a column operator to represent systems of equations in the usual form, Σ, ∫, etc.; (2) a powerful equivalence drag & drop; (3) the manipulation of fraction bars and radicals; (4) the representation and display of sub-problems; (5) the extension of equivalence evaluation (we will use results from applied mathematics and constraint programming); and (6) a redisplay function. Of course, we will also add features or make modifications suggested by the experiments.

Next, we plan to combine this microworld with our previous work, adding transformation rules and the knowledge needed to solve problems, evaluate students' solutions, give explanations and provide hints.

6 Domain of validity and discussion

To conclude, we would like to discuss the domain of validity as emphasised by Balacheff & Sutherland (1994), who defined four dimensions in mathematics for the epistemological domain of validity:

The first dimension is the set of problems that the microworld allows to be posed. For the expression-building activity of the Aplusix microworld, it is any well-formed expression using the operators and constants included in the system and respecting the types. For the reasoning activity, the set of problems consists of any problem concerning an algebraic expression belonging to the class of expressions the system can compare for equivalence. This class currently comprises polynomials in one variable, in any form and with any kind of coefficients, and equations in one unknown of degree less than 4. Other classes of expressions will be added later. Note that this is independent of the problem type (factor, expand, solve, etc.). The problem type just has to be algebraic, i.e., a problem must be invariant when its expression is replaced by an equivalent expression.

The second dimension is the nature of the tools and objects provided by its formal structure. The objects of the Aplusix microworld are algebraic expressions as defined in the theory of rewrite systems (Dershowitz and Jouannaud 1989), which is the most developed theory of expressions. The tool allows expressions to be built and compared according to the equivalence relationship.

The third dimension is the nature of the phenomenology over its formal structure. The phenomenology at the interface of the Aplusix microworld has maximum fidelity concerning the representation of expressions: implementing the usual 2D representation is our constant first principle for the interface. As described in this paper, we defined the editing features using fundamental concepts of the domain (the structural and equivalence relationships). Displaying equivalence is a natural phenomenon, since equivalence is a fundamental feature of any algebraic reasoning.

The fourth dimension is the sort of control the microworld makes available to users and the feedback provided. For the expression-building activity, the student may execute any editing action. The major difference from other editors is that ill-formed expressions are highlighted. Most of the time, actions that would lead to incorrect expressions are completed so as to obtain a well-formed expression (instead of the action being refused). This is a form of feedback. When the student disagrees with the system's interpretation, (s)he can undo the action. For the reasoning activity, the student may produce a new step at any time and receives the indication of equivalence as fundamental feedback. This kind of feedback, already implemented by McArthur (McArthur e.a. 1987) in an elementary context, has not been tested in depth. We look forward to observing how it can benefit students' learning.

Balacheff & Sutherland (1994) also discussed a didactic domain of validity for microworlds. On that issue, we will make just two points. First, the Aplusix microworld is fundamentally an algebraic world, not a pre-algebraic one. For example, there is no subtraction in it: the minus sign is a unary symbol (a-b is the sum of a and the opposite of b). So students who are not ready to be plunged into a strictly algebraic context will probably not benefit from using the system. Second, the system can be configured by the user or the teacher. A text file contains the description of the operators; it is possible to activate or deactivate an operator and to change some of its features. For example, one can allow or forbid variables in a denominator or under a square root. These parameters make it possible to customise the environment to a particular level.
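The treatment of the minus sign as a unary symbol can be illustrated by reading a flat sum into a list of terms, where a term preceded by - is stored as its opposite. This Python sketch is our own assumption (it handles only sums of atoms, without products or parentheses); `to_sum` and `same_sum` are hypothetical names.

```python
import re
from typing import List, Tuple, Union

Term = Union[str, Tuple[str, str]]  # an atom, or ("opp", atom) for its opposite


def to_sum(text: str) -> Tuple[str, List[Term]]:
    """Read a flat sum such as 'a-b+c' with no subtraction node:
    a minus sign turns its term into the opposite of that term."""
    terms: List[Term] = []
    for sign, atom in re.findall(r"([+-]?)\s*([^+\-\s]+)", text):
        terms.append(("opp", atom) if sign == "-" else atom)
    return ("sum", terms)


def same_sum(s: str, t: str) -> bool:
    """Two flat sums are equal up to the order of their arguments."""
    return sorted(map(str, to_sum(s)[1])) == sorted(map(str, to_sum(t)[1]))
```

Because there is no subtraction node, a-b and -b+a are the same sum up to the order of its arguments, whereas b-a is not.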

We have designed an algebraic microworld in an epistemological way, and we are finishing the first prototype. We hope that most of our choices are good, but we are not sure. The answer will come from the users: we will carefully analyse the discrepancies between the behaviour of the system and the users' expectations, in order to correct minor problems and to learn from major ones and correct them too. Major problems, and the lessons they provide, will be published.

References

Adobe FrameMaker V5.0 http://www.adobe.com/products/framemaker/main.html

Balacheff, N., Sutherland, R. (1994) Epistemological domain of validity of microworlds, the case of Logo and Cabri-géomètre. Lewis, R., Mendelsohn, P. (eds.) Proc. of the IFIP.

Beeson, M. (1996) Design Principles of Mathpert Software to support education in algebra and calculus. Kajler, N. (ed.) Human Interfaces to Symbolic Computation. Springer.

Bundy, A. and Welham, B. (1981) Using Meta-level Inference for Selective Application of Multiple Rewriting Rule Sets in Algebraic Manipulation. Artificial Intelligence vol 16, no 2.

Dershowitz, N., Jouannaud, J.P. (1989) Rewrite Systems. Handbook of Theoretical Computer Science, Vol B, Chap 15. North-Holland.

Foss, C.L. (1987) Learning from errors in algebraland. IRL report No IRL87-0003.

Graphing Calculator V1.2 http://www.pacifict.com/

Koedinger, K.R., Anderson, J.R. (1997) Intelligent Tutoring Goes To School in the Big City. International Journal of Artificial Intelligence in Education 8, 30-43.

Laborde, J.M. (1989) Designing Intelligent Tutorial Systems: the case of geometry and Cabri-géomètre. IFIP Working Conf. Educ. Software at the Sec. Educ. Level, Reykjavik, 1989.

McArthur, D., Stasz, C., and Hotta, J.Y. (1987) Learning problem-solving skills in algebra. Journal of Educational Technology Systems 15, 303-324.

Microsoft Equation V3.0 http://www.mathtype.com/msee

Nguyen-Xuan, A., Nicaud, J.F., Gélis, J.M., Joly, F. (1993) An Experiment in Learning Algebra with an Intelligent Learning Environment. Actes de PEG'93, Edinburgh.

Nguyen-Xuan, A., Bastide, A., Nicaud J.F. (1999) Learning to match algebraic rules by solving problems and by studying examples with an intelligent learning environment. Proc. AIED’99.

Nicaud, J.F., Aubertin, C., Nguyen-Xuan, A., Saïdi, M., and Wach, P. (1990) APLUSIX: a learning environment for student acquisition of strategic knowledge in algebra. Actes de PRICAI'90. Nagoya.

Nicaud, J.F. e.a. (1994) The APLUSIX project: a computer-aided teaching of algebra. Methodology, theoretical foundations, realisations and experiments. CALISCE 1994.

Oliver J. and Zukerman I. (1990) Dissolve: An Algebra Expert for an Intelligent Tutoring System. Proceeding of ARCE. Tokyo.

Papert, S. (1980) Mindstorms. Children, Computers, and Powerful Ideas. Harvester, Sussex.

StarOffice V5.1 http://www.sun.com/staroffice

Thompson, P. W. (1987) Mathematical Microworlds and Intelligent Computer Assisted Instruction. Artificial Intelligence and Instruction, Addison Wesley, Reading MA.

Thompson, P. W. (1989) Artificial Intelligence, Advanced Technology, and Learning and Teaching Algebra. In: Wagner, S. and Kieran, C. (eds.) Research Issues in the Learning and Teaching of Algebra. Lawrence Erlbaum.

Full paper: http://www.sciences.univ-nantes.fr/info/recherche/ia/projets/aplusix/

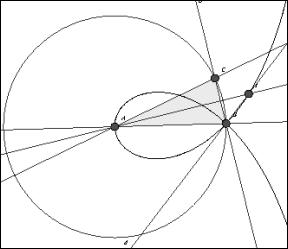

1 Geometric tools and constructions

For thousands of years compass and straightedge (ruler without scale) were the traditional tools of Euclidean geometry. Geometrical objects were points, straight lines, circles, triangles/quadrilaterals and so on. Rulers with scale and protractor became standard tools hundreds of years ago and consequently the length of segments, the size of angles, areas, and coordinates of points have become geometrical objects. Traditionally (in Germany) a student first does the construction and then writes down a description of how they carried out the construction.

In the 80's a type of geometry software became available with which one could do exactly the same constructions as with compasses, ruler and protractor. Constructions were made in an editor by typing special commands. The aim was to construct special triangles with given properties. It was static software. Because there was no real advantage other than being able to have an accurately printed sketch, this geometry software was not successful in the classroom.

In the late 80's and the 90's dynamic geometry software (DGS) was developed (Cabri Géomètre, Geometer's Sketchpad) and began to be used in teaching geometry. Constructions were now done by mouse-clicks on a graphic surface, with the DGS automatically producing a sequence of construction-commands. The aim was now not the construction of special triangles, but the investigation of invariances, functional dependencies and loci.

2 Special qualities of DGS

With DGS one can produce all the constructions that were possible with compasses, straightedge, ruler and protractor. Furthermore, dragmode and loci add new capabilities. This requires a deeper understanding of the construction and the sketch: two sketches which look identical on the screen can behave completely differently when they are dragged. 'Drag-stability' becomes an important criterion for the correctness of a construction, something that did not apply to paper & pencil constructions.

The distinction between drawing (= ‘Zeichnung’) and figure (= ‘Figur’) has been formulated for DGS by Parzysz and Strässer, but it had already been formulated quite clearly by Strunz (1968), who pointed out “die Idealität der geometrischen Figur, die eben doch etwas anderes ist als eine bloß retouchierte visuell wahrnehmbare Zeichnung” (= the ideality of the geometric figure, which is after all something other than a merely retouched, visually perceptible drawing). In the dragmode the drawing can be changed, as can be seen instantly on the screen. The figure, by contrast, represents the basic geometric relationships; it can be seen as the class of all drawings with the same properties and the same relationships, and it is not changed by dragging. The aim of constructing is not to construct a particular sketch, but to construct a figure and to investigate its properties, examining what changes and what remains invariant. The figure is represented in the DGS by a sequence of construction commands, or construction program. In the dragmode this construction program is run again and again with varied inputs.
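Drag-stability, and the idea of a figure as a construction program re-run with varied inputs, can be modelled in a few lines of Python. The sketch assumes a simple example of our own: in one sketch M is constructed as the midpoint of AB, in the other M is a free point dropped where the midpoint happened to be. The function names are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Point = Tuple[float, float]


def midpoint(A: Point, B: Point) -> Point:
    return ((A[0] + B[0]) / 2, (A[1] + B[1]) / 2)


def constructed(A: Point, B: Point) -> Dict[str, Point]:
    """M is constructed as the midpoint, so it depends on A and B."""
    return {"A": A, "B": B, "M": midpoint(A, B)}


def placed_by_eye(A: Point, B: Point) -> Dict[str, Point]:
    """M is a free point dropped where the midpoint was for A=(0,0), B=(4,2)."""
    return {"A": A, "B": B, "M": (2.0, 1.0)}


def is_midpoint(s: Dict[str, Point], tol: float = 1e-9) -> bool:
    mx, my = midpoint(s["A"], s["B"])
    return abs(s["M"][0] - mx) < tol and abs(s["M"][1] - my) < tol


def drag_stable(sketch: Callable, prop: Callable,
                drags: List[Tuple[Point, Point]]) -> bool:
    """Re-run the construction for every dragged position of the free points A, B."""
    return all(prop(sketch(A, B)) for A, B in drags)
```

Both sketches pass the midpoint test for the initial position A = (0, 0), B = (4, 2), so they look identical; only the constructed one keeps the property when B is dragged.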

Furthermore a deeper understanding of degrees of freedom (= 'Freiheitsgrad') and of geometrical dependencies is necessary. We have several kinds of points: a free base point has two degrees of freedom and can be moved at will in the plane / on the screen. A point on an object has one degree of freedom and can be moved on a straight line or on a circle and in some DGS can also be moved on a conic or other figures. An intersection point has no degree of freedom; it can be moved only indirectly by dragging the base objects. Similarly several kinds of line-segments and circles exist.
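The three kinds of points can be read off from how many parameters the user controls. In this Python sketch (our illustration; the function names are hypothetical), a free point takes two coordinates, a point on a circle takes a single angle t, and an intersection point takes no parameter of its own and is computed from two lines.

```python
import math
from typing import Optional, Tuple

Point = Tuple[float, float]


def free_point(x: float, y: float) -> Point:
    """Two degrees of freedom: both coordinates can be dragged."""
    return (x, y)


def point_on_circle(center: Point, r: float, t: float) -> Point:
    """One degree of freedom: dragging only changes the angle t."""
    return (center[0] + r * math.cos(t), center[1] + r * math.sin(t))


def intersection(P1: Point, P2: Point, Q1: Point, Q2: Point) -> Optional[Point]:
    """No degree of freedom: the point is computed from lines P1P2 and Q1Q2
    and moves only when they move (None if the lines are parallel)."""
    d1 = (P2[0] - P1[0], P2[1] - P1[1])
    d2 = (Q2[0] - Q1[0], Q2[1] - Q1[1])
    cross = d1[0] * d2[1] - d1[1] * d2[0]
    if abs(cross) < 1e-12:
        return None
    w = (Q1[0] - P1[0], Q1[1] - P1[1])
    s = (w[0] * d2[1] - w[1] * d2[0]) / cross  # parameter along P1P2
    return (P1[0] + s * d1[0], P1[1] + s * d1[1])
```

Whatever value of t is chosen, the point stays on its circle; the intersection point can only be moved indirectly, by changing the points that define the two lines.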

In the dragmode it is possible to measure lengths, angles or areas dynamically, to calculate with these dynamic measures and to make further dynamic constructions using the results. This offers new opportunities for finding and/or testing conjectures!
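Testing a conjecture by dragging amounts to re-measuring under many positions of the free points. The Python sketch below assumes random positions as a stand-in for the user's dragging (the helper names are hypothetical): the angle sum of a triangle survives every trial, whereas the false conjecture that the angle at A is always 60° fails at once. Surviving the trials is evidence, not a proof.

```python
import math
import random
from typing import Callable, Tuple

Point = Tuple[float, float]


def angle_at(P: Point, Q: Point, R: Point) -> float:
    """Measure the angle QPR (vertex P) in degrees."""
    v = (Q[0] - P[0], Q[1] - P[1])
    w = (R[0] - P[0], R[1] - P[1])
    cos_a = (v[0] * w[0] + v[1] * w[1]) / (math.hypot(*v) * math.hypot(*w))
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_a))))  # clamp rounding noise


def angle_sum(A: Point, B: Point, C: Point) -> float:
    """A calculation with three dynamic measurements."""
    return angle_at(A, B, C) + angle_at(B, C, A) + angle_at(C, A, B)


def survives_dragging(conjecture: Callable[[Point, Point, Point], bool],
                      trials: int = 1000, seed: int = 1) -> bool:
    """Re-test a conjecture for many random positions of the free points A, B, C."""
    rng = random.Random(seed)
    for _ in range(trials):
        A, B, C = [(rng.uniform(-10, 10), rng.uniform(-10, 10)) for _ in range(3)]
        if not conjecture(A, B, C):
            return False
    return True
```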

Examples of special DGS-qualities:

3 Formal proofs

For more than 2000 years the teaching of geometry has been influenced by formal proof in the style of Euclid. A proof is made line by line, drawing logical conclusions from conditions and known theorems, without regard to concreteness. This is the way scientific results are normally presented, but it is well known that it is not the way students learn geometry. Asked the classical question "Wie lernt jemand das Beweisen?" (= How does someone learn to prove?), most people give the classical answer "Indem man ihn Beweise lehrt" (= by teaching him proofs), "und sie ist, wie man weiss, untauglich" (= and, as we know, this answer is useless) (Freudenthal 1979).

Learning is building and organising knowledge in the mind, and it happens in an individual way. Individual hands-on learning is fundamental for this. The work of Piaget and Bruner is crucial for understanding the learning process. Following Piaget, it is assumed that 11-12-year-old students (6th-7th grade) have reached the formal operational stage, which enables them to engage in abstract and formal thinking. Studies in the 70's showed, however, that only 20-30% of students in 10th grade in German and American secondary schools had reached the formal operational stage (cited in Hannapel 1996).

This leads to the question of whether school demands abstract and complex thinking too early and whether teachers should not rather remain on a level of concrete examples before moving on to abstract concepts.

4 Preformal proving

In the didactic literature several less formal approaches have been suggested in past decades.

Polya (1954): plausible reasoning

Gardner (1973): ‘look-see’ diagrams

Bender (1989): anschauliche Beweise (= graphic proofs)

Wittmann/ Müller (1988): inhaltlich-anschauliche Beweise (= content-graphic proofs)

Blum/Kirsch (1989): handlungsbezogene Beweise; präformale Beweise (= action-oriented proofs, preformal proofs)

Nelsen (1993): Proofs without words

The movement away from Euclidean formalism can be traced back to Clairaut in the 18th century! Graphic, preformal proving inevitably raises the question of correctness, that is, of universal validity. The answer is to interpret the images as drawn reports of actions:

„Die Allgemeingültigkeit ergibt sich aus der Durchführbarkeit der Handlungen und nicht aus der Möglichkeit der visuellen Darstellung. Die Handlung ist es, die das Besondere mit dem Allgemeinen verbindet“

Universal validity results from the possibility of the realization of actions and not from the possibility of visual representation. The action connects the special case with the general. (Kautschitsch 1989).

In the students' minds, a sequence of moving images arises from a single sketch. In this way a drawing is seen not as a single image, but as a representative of a class of images, that is, of a figure.

The visual and dynamic abilities of interactive DGS with dragmode and loci offer new approaches to teaching and learning geometry. Geometrical theorems can be discovered and proved in a preformal, visual-dynamic way. Actions, formerly done with real objects or only in the mind, take place in the dragmode on the screen. This creates an intermediate step between the (often only partially practicable) actions with real objects and the abstract actions going on in the mind. So students can build mental images, or ‘movies’ in their minds, based on their own actions on the screen.

Thus preformal proofs gain a special, new quality by using DGS. I called them visual-dynamic proofs (Elschenbroich 1999). They are

visual: graphic, related to a drawing,

dynamic: not a single, static sketch, but an ideal sketch, a figure, which becomes alive through the dragmode of DGS,

proof: an answer to the question ‘why’, which cannot be questioned,

cannot be rocked by rational argumentation.

proof: an answer to the question ‘why’, which cannot be questioned,

cannot be rocked by rational argumentation.

A visual-dynamic proof is holistic: it comprises both the theorem and its reasoning. In addition, connecting a problem with an image makes the situation easier to remember.

5 Visualization

Pestalozzi was the first to stress the role of graphics in the learning process, but he confined the notion to images and perception. The importance of actions in forming brain structures was recognised only in the 20th century, by Piaget and Bruner.

Today visualization is still commonly used merely as a synonym for ‘illustration’. Didactical visualization (Boeckmann 1984), however, addresses not only the visual sense but also the individual’s actions.

Visualization in that sense is

- a guide to, and a facilitator of, (mental) activities by offering appropriate situations and activities in which to act (Dörfler 1984).

There is also a fundamental difference between visualization and multimedia animation. Understanding an animation is a task in itself, so learners with a poorly developed spatial sense often cannot use animations appropriately. In addition, viewers remain passive and do not engage in an active learning process.

Examples of visual-dynamic working:


6 Electronic Worksheets

Tasks starting from a ‘blank sheet’ proved too lengthy and error-prone (incomplete constructions, or the wrong kind of points, lines or circles). Even slight changes in the construction led to undesired, damaging effects: the construction was no longer stable or did not behave as intended. It took a long time before students could engage in meaningful mathematical activity with the worksheets they had constructed themselves.

There was also a conflict between mathematical aims and computer-science aims, because a particular way of geometrical programming was required.

The concept of electronic worksheets arose from grappling with these problems (Schumann 1998, Elschenbroich/ Seebach 1999). Offering ready-made constructions created a stable working platform, and additional information and tasks were integrated into text boxes on the sheets. The guiding idea is now: from constructing figures ... to working with figures.

This gives teachers more time in class and more security, and it creates a stable basis for hands-on learning. It is important here to offer tasks of the right scope.

Examples of electronic worksheets


7 Interactivity and the Internet

A weak point of electronic worksheets is that hints cannot be added and students cannot evaluate their own work. However, more and more DGS offer ways of presenting worksheets in a web context. Some DGS are completely integrated into the web, some allow constructions to be made within the web context (Cinderella), and others offer Internet viewers for their files (Cabri, Geometer’s Sketchpad). In this way the web browser becomes a learning environment.

Additional information can now be offered via links; hints can be read when necessary. The electronic worksheets become (more) interactive.

However, students’ self-evaluation is still a problem. In most programs the teacher can provide the solution via a link, but students can then read it without having worked on the exercise! This can have a negative effect on the learning process.

It is highly desirable that DGS offer automatic checking of students’ work. Cinderella has already taken a big step in this direction with its integrated property checker.

I believe this is the task of this decade for the other DGS. I expect that in the coming years most didactic progress will be made in this field, through the creation of interactive electronic worksheets, and that the web will become a universal learning environment.
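The automatic checking of students’ work called for above can be sketched, under stated assumptions, as randomized testing: the required property is tested for many random positions of the base points, and a single failure counts as a counterexample. The task (‘construct a point equidistant from A and B’), the function names and the tolerance are hypothetical, and this is not a description of how Cinderella’s property checker works internally.

```python
import math
import random

# Hypothetical task: "construct a point P that is equidistant from A and B".
# A student's construction is modelled as a function of the base points A, B.

def is_equidistant(P, A, B, tol=1e-9):
    """True if |PA| = |PB| up to a tolerance relative to the size of AB."""
    scale = max(1.0, math.dist(A, B))
    return abs(math.dist(P, A) - math.dist(P, B)) <= tol * scale

def check_construction(construct, trials=50, seed=0):
    """Randomized check: test the property for many random positions of A and B."""
    rng = random.Random(seed)
    for _ in range(trials):
        A = (rng.uniform(-10, 10), rng.uniform(-10, 10))
        B = (rng.uniform(-10, 10), rng.uniform(-10, 10))
        if not is_equidistant(construct(A, B), A, B):
            return False  # a counterexample position was found
    return True  # no counterexample found (evidence, not a formal proof)

def midpoint_solution(A, B):
    # Correct construction: the midpoint of AB.
    return ((A[0] + B[0]) / 2, (A[1] + B[1]) / 2)

def one_third_solution(A, B):
    # Incorrect construction: the point one third of the way from A to B.
    return (A[0] + (B[0] - A[0]) / 3, A[1] + (B[1] - A[1]) / 3)

print(check_construction(midpoint_solution))
print(check_construction(one_third_solution))
```

Passing such a test is strong evidence rather than a formal proof; its advantage for worksheets is that the student gets feedback without seeing the solution.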

8 Examples of electronic interactive worksheets

Examples: Chair of the Bride; a worksheet with automatic checking of the result.

Bibliography

Bender, P. (1989) Anschauliches Beweisen im Geometrieunterricht - unter besonderer Berücksichtigung von (stetigen) Bewegungen und Verformungen. Kautschitsch/ Metzler: Anschauliches Beweisen. Hölder-Pichler-Tempsky, Wien.

Bishop, A. J. (1981) Visuelle Mathematik. Steiner/ Winkelmann (ed.): Fragen des Geometrieunterrichts. IDM 1. Aulis, Köln.

Boeckmann, K. (1984) Warum soll man im Unterricht visualisieren? Theoretische Grundlagen der didaktischen Visualisierung. Kautschitsch/ Metzler: Anschauung als Anregung zum mathematischen Tun. Hölder-Pichler-Tempsky, Wien.

Clairaut, A.-C. (1773) Des Herrn Clairaut Anfangsgründe der Geometrie. Aus dem Französischen übersetzt von F. J. Bierling. Christian Herolds Witwe, Hamburg.

Davis, Ph. J. (1994) Visual Geometry, Computer Graphics, and Theorems of the Perceived Type. The Influence of Computing on Mathematical Research and Education. Proceedings of Symposia in Applied Mathematics, 20.

Davis, Ph. J. (1993) Visual Theorems. Educational Studies in Mathematics 24.

Dörfler, W.; Fischer, R. (ed.) (1979) Beweisen im Mathematikunterricht. Hölder-Pichler-Tempsky, Wien.

Elschenbroich, H.-J. (1997) Dynamische Geometrieprogramme: Tod des Beweisens oder Entwicklung einer neuen Beweiskultur? MNU 50(8).

Elschenbroich, H.-J. (1999) Visuelles Beweisen - Neue Möglichkeiten durch Dynamische Geometrie-Software. Beiträge zum Mathematikunterricht 1999. Franzbecker, Hildesheim.

Elschenbroich, H.-J. (2000) Neue Ansätze im Geometrieunterricht der SI durch elektronische Arbeitsblätter. Beiträge zum Mathematikunterricht 2000. Franzbecker, Hildesheim.

Elschenbroich, H.-J. (2001) DGS als Werkzeug zum präformalen, visuellen Beweisen. Elschenbroich/ Gawlick/ Henn: Zeichnung - Figur - Zugfigur. Franzbecker, Hildesheim.

Elschenbroich, H.-J.; Seebach, G. (2000) Dynamisch Geometrie entdecken. Elektronische Arbeitsblätter mit Cabri Géomètre II. Klasse 7/8. Dümmler, Köln.

Freudenthal, H. (1986) Was beweist die Zeichnung? mathematik lehren Heft 17.

Freudenthal, H. (1979) Konstruieren, Reflektieren, Beweisen in phänomenologischer Sicht. Dörfler/ Fischer: Beweisen im Mathematikunterricht. Hölder-Pichler-Tempsky, Wien.

Gardner, M. (1973) Mathematical Games. Scientific American, October 1973.

Hannapel, H. (1996) Lehren lernen. Kamp Schulbuchverlag, Bochum.

Handschel, G. (1988) Eine Ausgangsbasis für das Beweisen im Geometrieunterricht der Sekundarstufe I. MNU 41/ 7.

Heintz, G. (2000) WWW-basierte interaktive Arbeitsblätter für den Geometrie-Unterricht. Beiträge zum Mathematikunterricht 2000. Franzbecker, Hildesheim.

Kautschitsch, H. (1989) Wie kann ein Bild das Allgemeingültige vermitteln? Kautschitsch/ Metzler: Anschauliches Beweisen. Hölder-Pichler-Tempsky, Wien.

Kautschitsch, H./ Metzler, W. (ed.) (1984) Anschauung als Anregung zum mathematischen Tun. Hölder-Pichler-Tempsky, Wien.

Kautschitsch, H./ Metzler, W. (ed.) (1989) Anschauliches Beweisen. Hölder-Pichler-Tempsky, Wien.

Kirsch, A. (1979) Beispiele für 'prämathematische' Beweise. Dörfler/ Fischer: Beweisen im Mathematikunterricht. Hölder-Pichler-Tempsky, Wien.

Kusserow, W. (1928) Los von Euklid! Dürr'sche Verlagsbuchhandlung, Leipzig.

Malle, G. (2000) Zwei Aspekte von Funktionen: Zuordnung und Kovariation. mathematik lehren Heft 103.

Nelsen, R. B. (1993) Proofs Without Words. Exercises in Visual Thinking. The Mathematical Association of America, Washington.

Parzysz, B. (1988) 'Knowing' versus 'seeing'. Problems of the plane representation of space geometry figures. Educational Studies in Mathematics 19.

Polya, G. (1954) Mathematics & Plausible Reasoning. Princeton University Press.

Schumann, H. (1998) Interaktive Arbeitsblätter für das Geometrielernen. Mathematik in der Schule 36, Heft 10.

Strunz, K. (1968) Der neue Mathematik-Unterricht in pädagogisch-psychologischer Sicht. Quelle & Meyer, Heidelberg, 118.

Dynamic notions for Dynamic Geometry

Thomas Gawlick

Vechta, Germany

1. Dynamic problems with basic examples

2. On principles of Dynamic Geometry

3. Towards a theory of Dynamic Geometry

4. Advanced examples – The dynamic interplay between geometry and algebra

1 Dynamic problems with basic examples

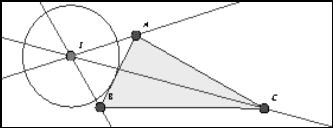

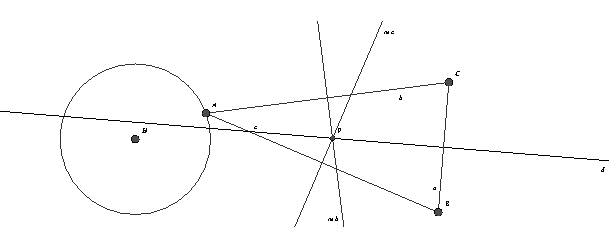

Students are expected to discover geometrical properties as invariants by exploring constructions in drag mode; Elschenbroich (2001), for instance, has presented a well-considered concept for interactive electronic worksheets. However, even some of the most elementary constructions behave unexpectedly:

Example 1

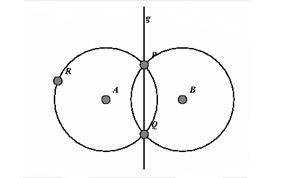

Figure 1a shows the construction of the perpendicular bisector g = PQ of segment AB by two circles of equal radius r. When A is dragged, the intersection points P and Q of the circles vanish for d(A, B) > 2r. However, "Cinderella" leaves g in place (Figure 1b)!

Fig. 1a, Fig. 1b
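The vanishing of P and Q in Example 1 follows from an elementary computation, sketched below with a hypothetical function name: two circles of equal radius r around A and B meet exactly when 0 < d(A, B) ≤ 2r, in two points on the perpendicular bisector at distance sqrt(r² - (d/2)²) from the midpoint M of AB.

```python
import math

def equal_circle_intersections(A, B, r):
    """Intersection points P, Q of the circles of radius r around A and B.

    Returns an empty list when the circles do not meet, i.e. when d(A, B) > 2r,
    and also when A = B (the circles coincide, so P and Q are undefined).
    """
    dx, dy = B[0] - A[0], B[1] - A[1]
    d = math.hypot(dx, dy)
    if d == 0 or d > 2 * r:
        return []
    M = ((A[0] + B[0]) / 2, (A[1] + B[1]) / 2)  # midpoint of AB
    h = math.sqrt(r * r - (d / 2) ** 2)          # distance from M to P and to Q
    nx, ny = -dy / d, dx / d                     # unit normal to AB
    return [(M[0] + h * nx, M[1] + h * ny), (M[0] - h * nx, M[1] - h * ny)]

r = 1.5
for d in (2.0, 2.5, 3.5):
    print(d, equal_circle_intersections((0.0, 0.0), (d, 0.0), r))
```

With r = 1.5 the points still exist at d = 2.5 and disappear at d = 3.5. Once they are gone, the line g = PQ has no defining points, which is why a g that stays on screen is surprising.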

Example 2

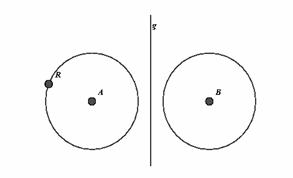

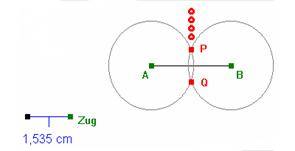

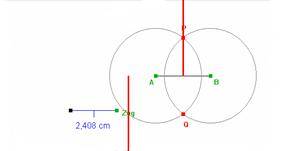

Dragging Zug changes the radius of the circle (Fig. 2a). In this way, students are meant to discover that the perpendicular bisector is the locus of points equidistant from A and B. However, when students draw the locus of P by dragging Zug, the "Euklid" version of the interactive worksheet shows a surprising jump (Fig. 2b).

Fig. 2a, Fig. 2b

Example 3

At a later stage, students are meant to discover the circumcentre by observing the behaviour of the intersection point P of two perpendicular bisectors. When trying this with "Cinderella", it is annoying, first, that one cannot trace the movement of P while dragging A freely; instead, one has to draw the locus of P as A moves along a curve, for instance a circle. But then it is confusing (if not misleading!) that the drawn locus is the whole perpendicular bisector, whereas dragging A reveals that P covers only a small part of it (Fig. 3).

Fig. 3
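The discrepancy in Example 3 can be checked numerically. The configuration in this sketch is an assumption chosen for illustration, not the one in Fig. 3: B = (-1, 0) and C = (1, 0) are fixed, so the circumcentre P always lies on the perpendicular bisector x = 0 of BC, and A runs once around the circle of radius 1 about (0, 3). Solving |PA| = |PB| gives y = (9 + 6 sin t)/(6 + 2 sin t), so P covers only the segment 0.75 ≤ y ≤ 1.875, not the whole bisector.

```python
import math

def circumcentre(A, B, C):
    """Circumcentre of triangle ABC (standard determinant formula)."""
    ax, ay = A
    bx, by = B
    cx, cy = C
    d = 2 * (ax * (by - cy) + bx * (cy - ay) + cx * (ay - by))
    a2, b2, c2 = ax * ax + ay * ay, bx * bx + by * by, cx * cx + cy * cy
    ux = (a2 * (by - cy) + b2 * (cy - ay) + c2 * (ay - by)) / d
    uy = (a2 * (cx - bx) + b2 * (ax - cx) + c2 * (bx - ax)) / d
    return (ux, uy)

B, C = (-1.0, 0.0), (1.0, 0.0)  # hypothetical fixed vertices
N = 3600
xs, ys = [], []
for k in range(N):
    t = 2 * math.pi * k / N
    A = (math.cos(t), 3.0 + math.sin(t))  # A runs once around a circle
    x, y = circumcentre(A, B, C)
    xs.append(x)
    ys.append(y)

print(max(abs(x) for x in xs))  # P stays on the bisector x = 0
print(min(ys), max(ys))         # but only covers a bounded segment of it
```

A locus routine that completes the trace to an algebraic curve would instead report the whole line x = 0; whether a particular DGS computes its loci this way is not modelled here.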

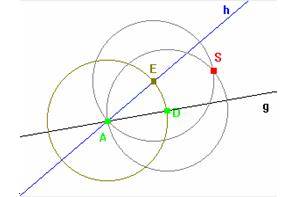

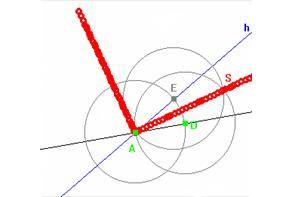

Example 4

To help students come to know the angle bisector as the locus of points equidistant from the two sides, they are asked to vary the radius in the three-circle construction by dragging D (Fig. 4a). However, when D passes through the vertex, S deviates orthogonally from the expected path, as shown by the locus of S with respect to D in Fig. 4b ("Euklid", "Cabri").

Fig. 4a, Fig. 4b
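A small model makes the orthogonal deviation in Example 4 plausible. Assume the vertex V is at the origin, D = (t, 0) is dragged along the line of side 1, E is the point on side 2 with |VE| = |VD|, and the last step uses circles of radius |VD| around D and E, which meet in V and in S = D + E (VDSE is a rhombus). Which intersection a given program picks for E is not stated in the text; the two rules below are hypothetical: a "continuous" rule, in which E passes through V together with D, and a "fixed-ray" rule, in which E always stays on the original ray of side 2.

```python
import math

def three_circle_point(t, alpha, rule):
    """S = D + E for D = (t, 0) on side 1 and E on side 2 (direction angle alpha)."""
    w = (math.cos(alpha), math.sin(alpha))   # unit vector along side 2
    s = t if rule == "continuous" else abs(t)  # hypothetical choice of E
    D = (t, 0.0)
    E = (s * w[0], s * w[1])
    return (D[0] + E[0], D[1] + E[1])

def dot(p, q):
    return p[0] * q[0] + p[1] * q[1]

def cross(p, q):
    return p[0] * q[1] - p[1] * q[0]

alpha = 1.0
bisector = (1 + math.cos(alpha), math.sin(alpha))  # direction u + w of the angle bisector

def on_bisector(S):
    return abs(cross(S, bisector)) < 1e-12

def perpendicular_to_bisector(S):
    return abs(dot(S, bisector)) < 1e-12

for t in (0.5, -0.5):  # D before and after passing through the vertex
    S_cont = three_circle_point(t, alpha, "continuous")
    S_fixed = three_circle_point(t, alpha, "fixed-ray")
    print(t, on_bisector(S_cont), on_bisector(S_fixed), perpendicular_to_bisector(S_fixed))
```

Under the fixed-ray rule, S = t(u - w) for t < 0, where u and w are the unit vectors along the sides; this is perpendicular to u + w because |u| = |w|, which matches an orthogonal deviation of S once D has passed the vertex.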

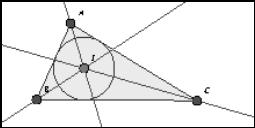

Example 5

In Figure 5a, by dragging the vertices of the triangle, students may discover that its angle bisectors always meet in one point I. However, it may be considered harmful, especially with regard to interpreting I as the incentre, that dragging vertex B through A makes “Cinderella” move I out of the triangle (Fig. 5b).

Fig. 5a, Fig. 5b
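A minimal sketch of what may happen in Example 5, assuming that a continuously tracking DGS carries each bisector along as B passes through A, which amounts to reversing the orientation of AB in both bisector formulas. Under that assumption the two tracked bisectors become the external bisectors at A and B, and they meet in the excentre opposite C, which lies outside the triangle. The coordinates and helper names are hypothetical; the incentre and excentre formulas are the standard barycentric ones.

```python
import math

def sub(p, q):
    return (p[0] - q[0], p[1] - q[1])

def unit(v):
    n = math.hypot(v[0], v[1])
    return (v[0] / n, v[1] / n)

def cross(p, q):
    return p[0] * q[1] - p[1] * q[0]

def line_intersection(p, d, q, e):
    """Intersection of the lines p + s*d and q + t*e (assumed not parallel)."""
    s = cross(sub(q, p), e) / cross(d, e)
    return (p[0] + s * d[0], p[1] + s * d[1])

def bisector_meet(A, B, C, sign):
    """Meet of the bisector lines at A and B.

    sign = +1: internal bisectors, directions u_AB + u_AC and u_BA + u_BC.
    sign = -1: orientation of AB reversed in both formulas (assumed model of
    the bisectors carried along after B has passed through A).
    """
    uAB, uAC = unit(sub(B, A)), unit(sub(C, A))
    uBA, uBC = unit(sub(A, B)), unit(sub(C, B))
    dA = (sign * uAB[0] + uAC[0], sign * uAB[1] + uAC[1])
    dB = (sign * uBA[0] + uBC[0], sign * uBA[1] + uBC[1])
    return line_intersection(A, dA, B, dB)

A, B, C = (0.0, 0.0), (4.0, 0.0), (1.0, 3.0)
a, b, c = math.dist(B, C), math.dist(C, A), math.dist(A, B)  # side lengths
incentre = tuple((a * A[i] + b * B[i] + c * C[i]) / (a + b + c) for i in range(2))
excentre_C = tuple((a * A[i] + b * B[i] - c * C[i]) / (a + b - c) for i in range(2))

print(bisector_meet(A, B, C, +1), incentre)    # same point, inside ABC
print(bisector_meet(A, B, C, -1), excentre_C)  # same point, beyond side AB
```

Since C lies above AB here, the excentre opposite C lies below AB, so "I" has left the triangle even though every individual bisector moved continuously.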

The list of examples could easily be continued. The last one in particular, which occurred in a classroom during an empirical study, can put the teacher in real difficulties (in the observed situation, these were “solved” by reloading the worksheet). One is naturally led to the question: are these counter-intuitive effects due to

flaws of the software?

flaws of the construction?

flaws of our notions?

In some important respects, the last turns out to be the case! This strongly underlines the need for a theory of dynamic geometry.

2 On principles of Dynamic Geometry

The unwanted behaviour of intersection points in our examples suggests postulating the

Continuity Principle: DGS should move objects continuously in drag mode.

Likewise, pitfalls such as the “extra-triangular” incentre prompt the demand for the

Determinism Principle: For any position of the base points there should be only one position of the constructed elements.

So why not simply use a DGS that conforms to both principles? Unfortunately, these desirable principles are mutually exclusive! In fact, we shall later prove the

Exclusion Theorem: A continuous DGS cannot be deterministic.
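The following numerical illustration, which is not the proof announced above, shows the conflict for one hypothetical construction: A = (0, 0) and B = (1, 0) are fixed, C is dragged once around A on the unit circle, and S is the point at distance 1 from A on the angle bisector of ∠BAC. A deterministic rule computes S from the current positions only; a continuous rule follows the bisector angle along the drag path. Both rules and all names are assumptions made for this sketch.

```python
import math

def s_deterministic(phi):
    """S = A + normalised(u_AB + u_AC); depends only on the position of C."""
    vx, vy = 1.0 + math.cos(phi), math.sin(phi)
    n = math.hypot(vx, vy)  # zero only when C is exactly opposite B
    return (vx / n, vy / n)

def s_continuous(phi):
    """Follow the bisector angle phi / 2 along the unwrapped drag path."""
    return (math.cos(phi / 2), math.sin(phi / 2))

def trace(rule, start=0.5, steps=1000):
    """Positions of S while C makes one full turn, phi = start ... start + 2*pi."""
    return [rule(start + 2 * math.pi * k / steps) for k in range(steps + 1)]

def largest_step(points):
    return max(math.dist(p, q) for p, q in zip(points, points[1:]))

det = trace(s_deterministic)
cont = trace(s_continuous)

# Deterministic: S returns to its start, but jumps when C passes the ray opposite B.
print(math.dist(det[0], det[-1]), largest_step(det))
# Continuous: only small steps, but S ends on the opposite side of A.
print(largest_step(cont), math.dist(cont[0], cont[-1]))
```

The deterministic rule violates the Continuity Principle (a jump of length close to 2), while the continuous rule violates the Determinism Principle: C is back where it started, yet S occupies a different position.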